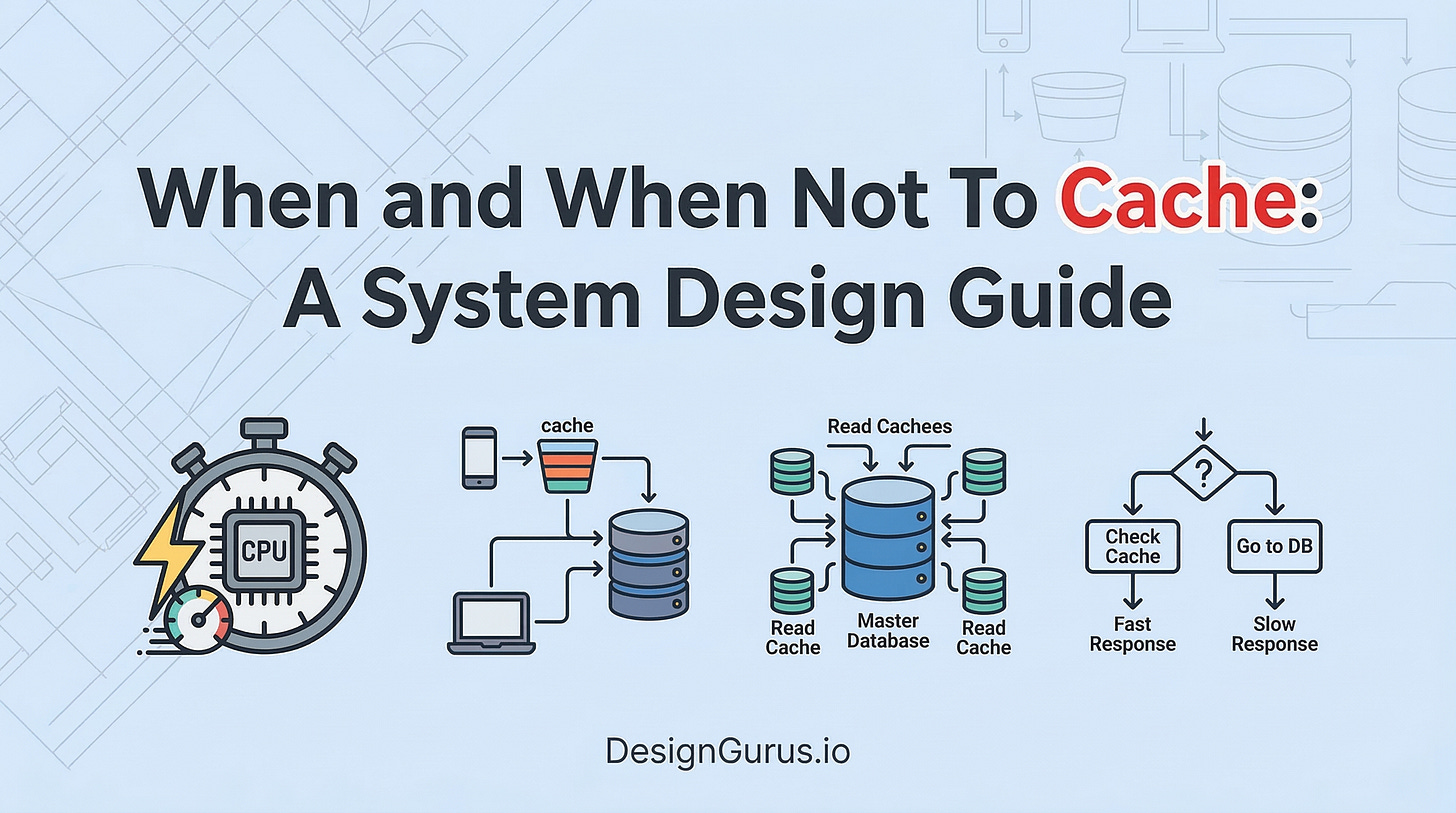

When and When Not To Cache: A System Design Guide

Master caching strategies for your system design interview. Learn exactly when and when not to use a cache, how to manage stale data with TTL, and understand cache eviction policies like LRU and LFU.

Software applications frequently handle massive volumes of incoming data requests. When web traffic surges unexpectedly, the primary database struggles to process every query in real time. The entire system slows down dramatically, causing basic operations to stall and time out.

This performance degradation is a critical architectural problem that engineering teams must solve to maintain system stability.

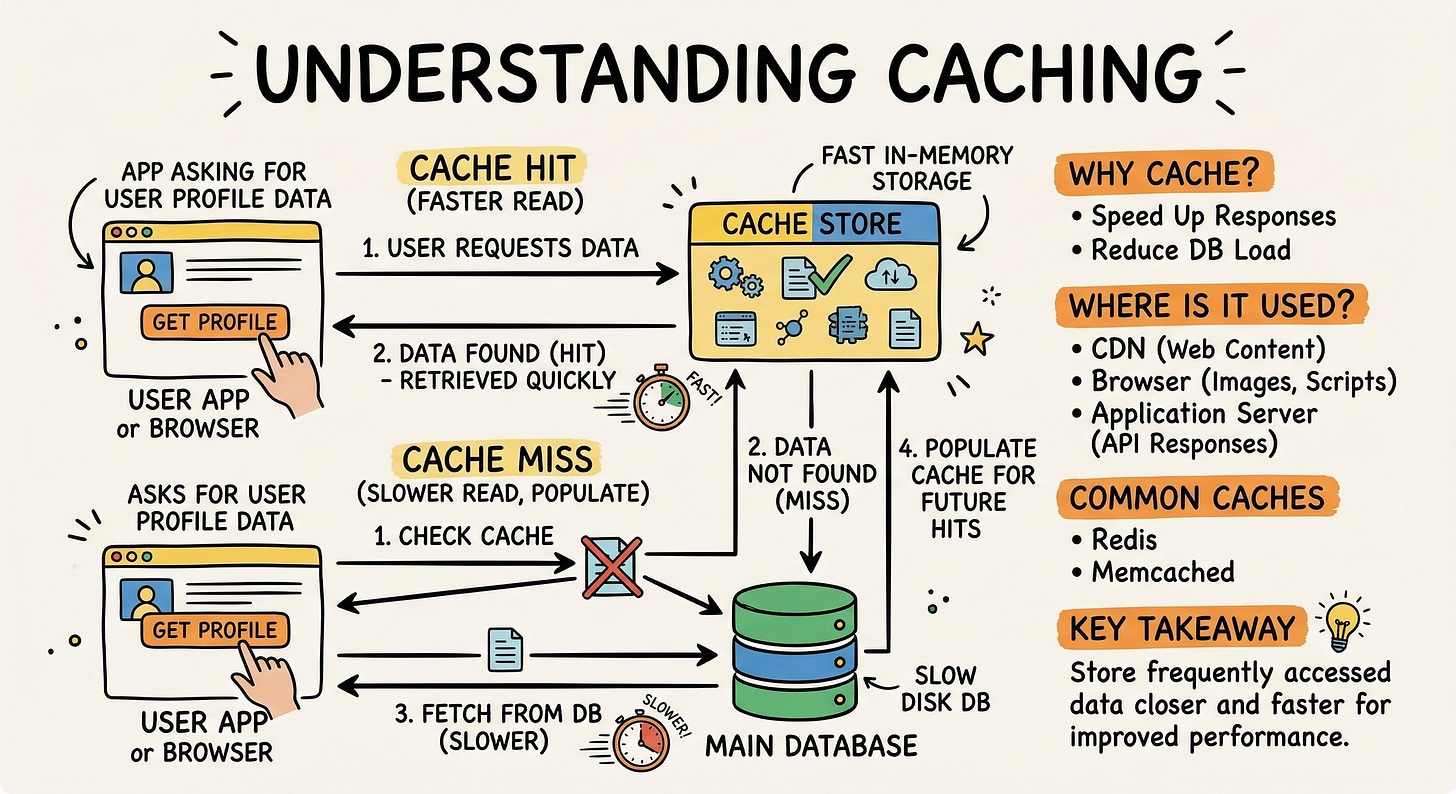

To solve this data retrieval delay, engineers introduce a specialized memory storage layer known as a cache.

Caching stores frequently requested data in fast memory so that repeated reads can skip slow database queries.

Instead of querying the database, which typically reads from disk, the system retrieves a duplicate copy directly from memory. This fundamental distributed systems technique reduces response latency and protects primary databases from crashing under heavy load.

Behind the scenes, this process follows a strict sequence of events. An application receives a data request for a specific user profile. It first checks the fast memory layer for this exact profile.

If the profile exists in memory, a cache hit occurs, and the data is returned instantly.

If the profile is missing, a cache miss occurs. The application must then query the primary database to find the accurate information. Once the database returns the profile, the application saves a duplicate copy into the fast memory layer.

Finally, the application returns the data to the originating request.
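The read path described above is commonly called the cache-aside (or lazy loading) pattern. The following Python sketch assumes a plain in-process dictionary standing in for the fast memory layer and a hypothetical `load_from_db` function standing in for the primary database; `CacheAsideReader` and `get_profile` are illustrative names, not the API of any particular caching library.

```python
from typing import Callable, Dict, Optional


class CacheAsideReader:
    """Cache-aside read path: check memory first, fall back to the database on a miss."""

    def __init__(self, load_from_db: Callable[[str], Optional[dict]]) -> None:
        self._cache: Dict[str, dict] = {}   # in-process dict standing in for the memory layer
        self._load_from_db = load_from_db   # hypothetical primary-database lookup
        self.hits = 0
        self.misses = 0

    def get_profile(self, user_id: str) -> Optional[dict]:
        # Step 1: check the fast memory layer for this exact profile.
        profile = self._cache.get(user_id)
        if profile is not None:
            self.hits += 1                   # cache hit: return the copy immediately
            return profile
        # Step 2: cache miss, so query the primary database.
        self.misses += 1
        profile = self._load_from_db(user_id)
        # Step 3: save a duplicate copy in memory (only if the database found one).
        if profile is not None:
            self._cache[user_id] = profile
        # Step 4: return the data to the originating request.
        return profile


# Usage: a fake database that records every query it receives.
db_calls = []


def fake_db(user_id: str) -> Optional[dict]:
    db_calls.append(user_id)
    return {"id": user_id, "name": "Ada"}


reader = CacheAsideReader(fake_db)
reader.get_profile("42")  # miss: queries the database and fills the cache
reader.get_profile("42")  # hit: served from memory
print(reader.hits, reader.misses, len(db_calls))  # 1 1 1
```

This sketch deliberately does not cache missing profiles, so a lookup for a nonexistent user reaches the database every time. Some systems cache such negative results briefly to shield the database, at the cost of extra bookkeeping.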

Adding a memory layer without a clear architectural strategy leads to complex system bugs. Developers often start storing every single piece of data in active memory as a quick fix.

This reflex creates hidden architectural flaws, bloats system resources, and serves outdated information. Understanding exactly when to use this technique is essential for building highly reliable software.

Evaluating Data Access Frequency

The first technical factor to evaluate is the frequency of data access. Every piece of data has its own access pattern within a system architecture.

Engineers must evaluate the ratio of how often data is read compared to how often it is written.

High Read Volumes

A high read-to-write ratio marks data as an excellent candidate for fast memory.
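One way to apply this rule is to count reads and writes per key from an access log. The sketch below assumes a log of `(operation, key)` pairs and a hypothetical `min_ratio` threshold of 10 reads per write; `cache_candidates` is an illustrative helper, and the right threshold depends on the system rather than on any fixed rule.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def cache_candidates(
    access_log: Iterable[Tuple[str, str]],  # (operation, key); operation is "read" or "write"
    min_ratio: float = 10.0,                # hypothetical threshold: reads per write
) -> List[str]:
    """Return keys whose read-to-write ratio is at least min_ratio, highest ratio first."""
    reads: Counter = Counter()
    writes: Counter = Counter()
    for op, key in access_log:
        if op == "read":
            reads[key] += 1
        elif op == "write":
            writes[key] += 1

    ratios = {}
    for key in set(reads) | set(writes):
        # Treat a key with zero writes as if it had one, to avoid dividing by zero.
        ratios[key] = reads[key] / max(writes[key], 1)

    candidates = [key for key, ratio in ratios.items() if ratio >= min_ratio]
    return sorted(candidates, key=lambda key: ratios[key], reverse=True)


# A config key written once and read often, versus sensor events written far more than read.
log = (
    [("write", "config")]
    + [("read", "config")] * 500
    + [("write", "sensor_event")] * 200
    + [("read", "sensor_event")] * 2
)
print(cache_candidates(log))  # ['config']
```

Here `config` has a ratio of 500 reads per write, while `sensor_event` has 0.01, so only the configuration qualifies for the memory layer.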

Consider a software service that serves static configuration settings to thousands of connected client devices.

The system writes the configuration file once, but clients read it millions of times a day. Storing this configuration in fast memory prevents millions of redundant database queries.

The true value of a memory layer is measured by how much read traffic it absorbs before requests reach the database, usually expressed as the cache hit ratio. Absorbing this traffic prevents the primary database from becoming overwhelmed by concurrent connections.
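A quick simulation of the configuration example makes this absorption concrete. Assuming a single hypothetical `config` key and a cache with no expiry, every read after the first is a hit, so the database sees one query instead of one per read. More generally, the database receives roughly total requests × (1 − hit ratio) queries. `simulate_config_reads` is an illustrative name, not part of any library.

```python
def simulate_config_reads(num_reads: int) -> dict:
    """Serve num_reads requests for one config key through a simple in-memory cache."""
    cache: dict = {}
    hits = 0
    db_queries = 0
    for _ in range(num_reads):
        if "config" in cache:
            hits += 1                                   # served from memory
        else:
            db_queries += 1                             # miss: one trip to the database
            cache["config"] = {"max_connections": 100}  # hypothetical config contents
    hit_ratio = hits / num_reads if num_reads else 0.0
    return {"hits": hits, "db_queries": db_queries, "hit_ratio": hit_ratio}


stats = simulate_config_reads(1_000_000)
print(stats["db_queries"])  # 1
print(stats["hit_ratio"])   # 0.999999
```

Real caches expire or evict entries, so the hit ratio in practice sits below this idealized figure, but the shape of the result holds: a read-heavy key turns millions of database queries into a handful.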

Data that is requested repeatedly by multiple components provides the highest return on investment for memory usage. Fast memory excels at handling high-volume, repetitive read operations.

Low Read Volumes

If a system writes data frequently but rarely reads it, placing that data in fast memory provides almost no benefit. The memory layer simply consumes physical resources storing data that no component ever requests. Memory capacity is limited and costs significantly more per gigabyte than the disk storage a database uses.
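The opposite case can be sketched the same way. Assuming a hypothetical event stream where every record is cached as it is written, but only one in every `read_every` records is ever requested, most of the memory ends up holding entries that no reader touches. `cache_waste` and the `event:` key scheme are illustrative only.

```python
def cache_waste(num_writes: int, read_every: int) -> dict:
    """Cache every written record, then count how many are ever read back."""
    cache: dict = {}
    read_keys: set = set()
    for i in range(num_writes):
        key = f"event:{i}"          # hypothetical key scheme: each write is a new record
        cache[key] = {"seq": i}     # every write also lands in memory
        if i % read_every == 0:     # only one record in every `read_every` is requested
            read_keys.add(key)
    # Every read key was stored, so the rest of the cache is never used.
    return {"stored": len(cache), "read": len(read_keys), "unused": len(cache) - len(read_keys)}


print(cache_waste(10_000, 100))  # {'stored': 10000, 'read': 100, 'unused': 9900}
```

In this run, 99% of the cached entries occupy memory without ever serving a request, which is exactly the cost the write-heavy pattern imposes.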
