Batch vs. Stream Processing: How to Balance Latency and Accuracy

Understand the difference between batch and stream processing. This blog explains the Speed Layer, Batch Layer, and the modern Kappa alternative for system design students.

Designing a data system often feels like choosing between two conflicting goals. On one side, there is the need for speed. Modern applications require data to be available the moment it is generated. Latency must be low so that metrics are visible immediately.

On the other side, there is the need for absolute accuracy and historical completeness. Data arrives late, servers fail, and networks have glitches.

If a system prioritizes speed above all else, it often sacrifices the correctness of the data.

This creates a fundamental tension in system design.

High throughput and low latency usually come at the cost of data consistency or complexity.

Historically, engineers had to choose. They could build a system that gave quick, approximate answers, or they could build a system that gave slow, perfect answers.

The challenge lies in creating an architecture that handles massive scale while satisfying both requirements. We need a way to process data as it flows in for immediate insight, while simultaneously retaining a perfect record for deep analysis later.

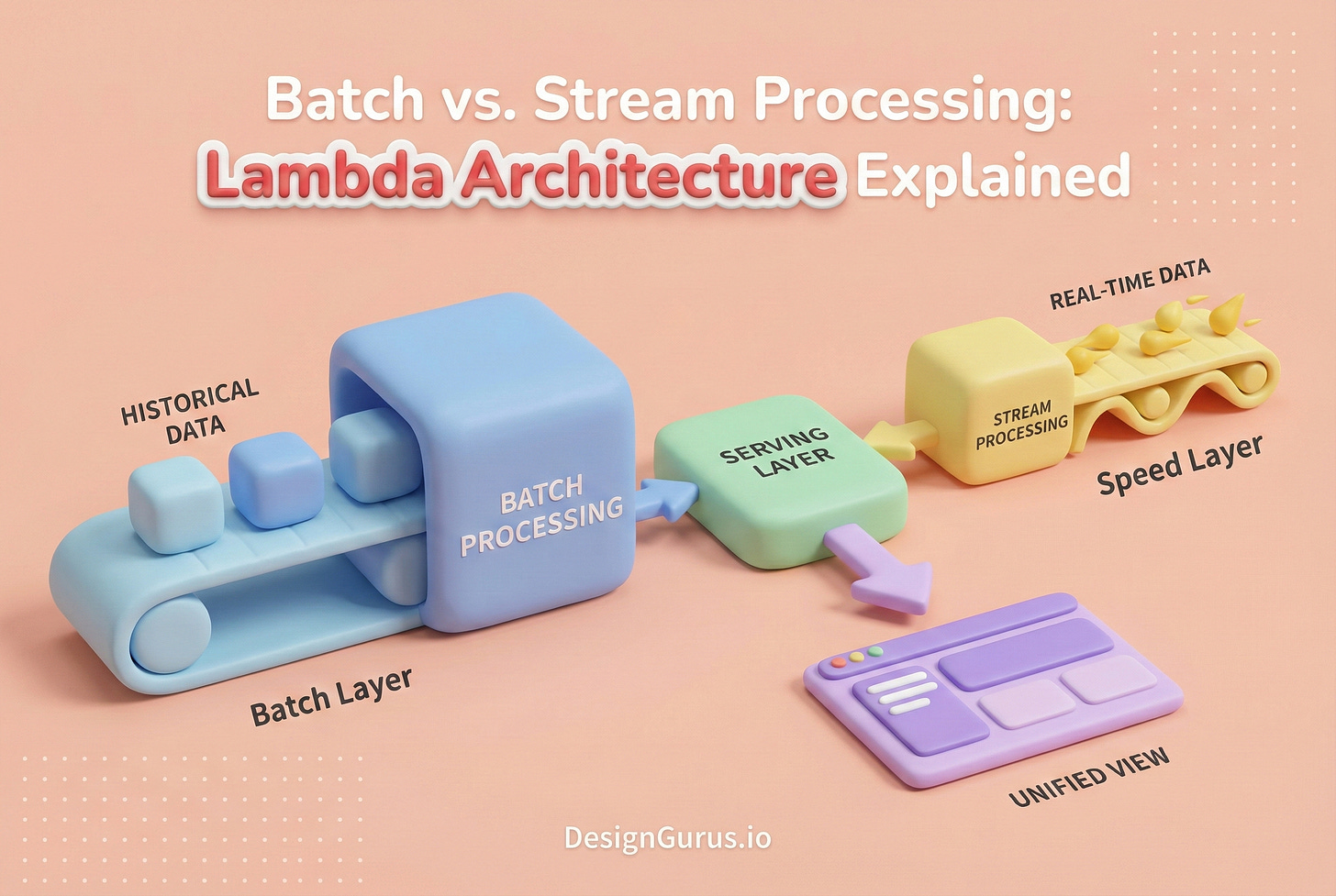

This is where the concepts of Batch Processing, Stream Processing, and the Lambda Architecture come into play.

The Core Data Challenge: Latency vs. Accuracy

To understand why hybrid architectures exist, we must first look at the two primary ways computers process large volumes of data.

Understanding the strengths and weaknesses of each approach is essential for any system design candidate.

Batch Processing

Batch processing is the traditional method for managing large volumes of data.

In this model, data is not processed as soon as it enters the system.

Instead, the system collects data over a period of time. This collection forms a bounded group of data, known as a batch.

Once enough data has accumulated, or a specific time interval has passed, the system processes the entire block of data at once. This approach allows for high efficiency.

The computer can optimize how it reads and writes information because it has the full dataset available. It can sort, filter, and aggregate with a complete view of that specific time window.

The Strength: Accuracy and Simplicity

The primary benefit of batch processing is correctness.

If a calculation fails, the system can simply restart the job for that batch.

Because the data is static (it is not moving or changing during the process), the results are deterministic. The system can also easily handle “late” data.

If a record from 1:00 PM arrives at 1:15 PM, but the batch job runs at 2:00 PM, the system simply includes that record in the calculation without any special logic.

The Weakness: High Latency

The downside is the delay. There is a significant gap between the moment data is created and the moment it is available for query. The system must wait for the data to accumulate, and then it must wait for the processing job to finish.

This delay can range from minutes to hours. In a world that demands instant feedback, this “staleness” is often unacceptable.

Stream Processing

Stream processing takes the opposite approach. In this model, data is viewed as an unbounded flow of individual events.

Keep reading with a 7-day free trial

Subscribe to System Design Nuggets to keep reading this post and get 7 days of free access to the full post archives.