The One System Design Answer That Guarantees Rejection

Learn the most common mistake junior developers make in system design interviews and how to correctly answer the scalability question.

The blog covers:

Vertical versus horizontal scaling

Physical hardware limitations explained

Removing single points of failure

Load balancing distribution logic

Stateless architecture for consistency

The transition from writing functional code to designing large-scale systems is a significant leap in an engineering career.

While coding focuses on logic, syntax, and algorithmic efficiency within a single program, system design focuses on the architecture required to run that program for millions of users simultaneously. This shift requires a fundamental change in how you think about hardware and resource management.

Engineers often approach problems with a mindset of optimization. They look for the most direct way to increase performance.

However, in the context of system design, the most direct solution is often the one that leads to critical architectural failures when placed under the stress of high traffic.

The Interview Scenario

In a standard System Design Interview (SDI), the interviewer will almost always present a scenario involving rapid growth.

The prompt will describe a service that has successfully gained traction.

The number of active users is increasing exponentially, and the data volume is skyrocketing. The current infrastructure, which likely consists of a standard web server and a database, is becoming slow and unresponsive.

The interviewer will then ask a pivotal question regarding how to handle this increased load.

The answer provided at this moment is a strong indicator of the candidate’s seniority and understanding of distributed systems.

The most common answer from beginners is to upgrade the existing server. They suggest replacing the current machine with one that has a faster Central Processing Unit (CPU), more Random Access Memory (RAM), and larger Solid State Drives (SSDs).

This approach is known as Vertical Scaling.

While this solution is technically valid for small-scale applications or personal projects, it is considered the wrong answer for large-scale system design.

Proposing this as the primary strategy typically results in a rejection because it ignores the fundamental constraints of hardware and the requirements of high availability.

To pass the interview and become a competent architect, one must understand why vertical scaling fails and what architecture should replace it.

The Limits of Vertical Scaling

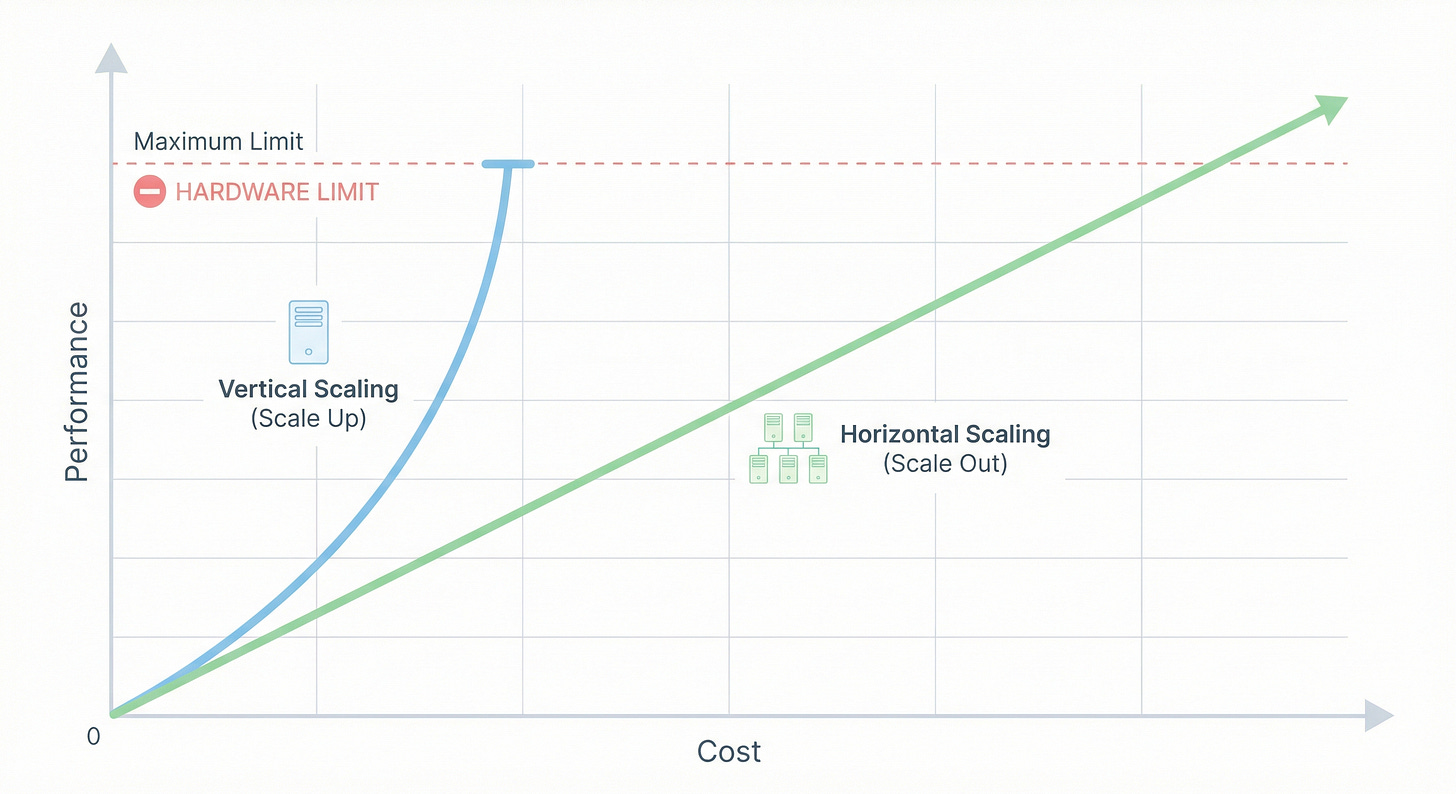

Vertical scaling is the act of increasing the compute capacity of a single node within a system.

The logic is linear: if the software needs more memory to process user requests, adding more memory to the physical machine should solve the problem.

However, this approach faces three insurmountable barriers: the physical hardware limit, the exponential cost curve, and the risk of total system failure.
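The third barrier, total system failure, can be made concrete with a little arithmetic. The sketch below is only an illustration: it assumes each machine is up 99% of the time (a hypothetical figure) and that machines fail independently of one another, which real deployments only approximate. The function name is made up for this example.

```python
def prob_all_down(node_availability: float, nodes: int) -> float:
    """Probability that every node is down at the same moment.

    Assumes failures are independent, which is a simplification.
    """
    return (1.0 - node_availability) ** nodes


# Hypothetical availability for one machine: up 99% of the time.
availability = 0.99

# One big server: if it is down, the whole service is down.
single_server_outage = prob_all_down(availability, nodes=1)

# Two machines serving the same traffic: both must fail together.
two_server_outage = prob_all_down(availability, nodes=2)

print(f"one server down:  {single_server_outage:.4%}")
print(f"both servers down: {two_server_outage:.4%}")
```

Under these assumptions, a bigger single machine does nothing to the outage probability, because there is still exactly one machine to lose.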

The Hardware Ceiling

Computers are bound by the laws of physics and current manufacturing capabilities. There is a hard limit to how much computing power can be packed onto a single motherboard.

A motherboard has a finite number of slots for RAM sticks.
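To see how quickly that ceiling arrives, here is a rough back-of-the-envelope sketch. Every number in it is an assumption chosen for illustration (16 RAM slots, 64 GB per module, 2 MB of memory per active user), not a specification of any real board, and the function names are hypothetical.

```python
def max_ram_gb(slots: int, max_module_gb: int) -> int:
    """Largest amount of RAM one board can hold: slots times module size."""
    return slots * max_module_gb


def required_ram_gb(concurrent_users: int, mb_per_user: float) -> float:
    """Memory needed if each active user costs a fixed number of megabytes."""
    return concurrent_users * mb_per_user / 1024


def fits_on_one_machine(required_gb: float, slots: int, max_module_gb: int) -> bool:
    """True if the demand is within the board's physical RAM ceiling."""
    return required_gb <= max_ram_gb(slots, max_module_gb)


# Assumed hardware: 16 slots, 64 GB per module (illustrative only).
SLOTS = 16
MODULE_GB = 64

for users in (100_000, 1_000_000):
    need = required_ram_gb(users, mb_per_user=2)
    ok = fits_on_one_machine(need, SLOTS, MODULE_GB)
    print(f"{users:>9} users need {need:8.1f} GB; fits on one machine: {ok}")
```

With these assumed figures the board tops out at 1,024 GB. The demand for 100,000 users fits comfortably, but at 1,000,000 users it is roughly double what the board can hold, and no upgrade to that machine can close the gap.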