The Complete Guide to Content Delivery Networks (CDNs) for System Design Interviews [2026 Edition]

Understand how CDNs work behind the scenes, how HTTP caching really behaves, and how to discuss CDNs confidently in system design rounds.

This guide covers:

CDN goals and core model

Request routing and edges

Caching rules and headers

Reliability and security patterns

Interview-ready design approach

Software applications face a hard physical limitation: geographical distance. Data packets traveling across global networks incur propagation delays that no amount of server tuning can remove. These delays add up to slow loading times and degraded application performance.

The physical distance between a centralized server and a remote device creates a fundamental bottleneck. Solving this distance problem is a core requirement in large-scale system design.

Understanding this topic matters because performance directly shapes user behavior.

If a web application takes too long to load, users abandon it and retention drops. Software engineers must know how to architect systems that work around these physical limitations.

A central concept used to solve this problem is the Content Delivery Network.

A Content Delivery Network is a geographically distributed group of physical servers. The core concept revolves around moving data physically closer to the end device.

If a central server is located in New York, a request originating from Tokyo must travel across the Pacific Ocean. Even at the speed of light in fiber optic cable, roughly 200,000 km per second, every round trip on that path takes on the order of 100 milliseconds or more.

By placing a distribution server directly inside Tokyo, the data only travels a few local miles.
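The distance argument can be checked with back-of-the-envelope arithmetic. The sketch below computes only the propagation lower bound, assuming a fiber refractive index of about 1.47 and illustrative path lengths (roughly 10,900 km for New York to Tokyo, and 50 km to a hypothetical PoP inside Tokyo). Real cable routes are longer and add queuing and processing time, so actual round trips are slower.

```python
# Light travels at ~299,792 km/s in a vacuum. Optical fiber has a refractive
# index of roughly 1.47, so signals move at about two-thirds of that speed.
SPEED_OF_LIGHT_KM_S = 299_792
FIBER_REFRACTIVE_INDEX = 1.47
FIBER_SPEED_KM_S = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX


def min_round_trip_ms(path_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone.

    Ignores routing hops, queuing, handshakes, and server processing,
    so any real measured RTT will be higher than this.
    """
    one_way_seconds = path_km / FIBER_SPEED_KM_S
    return 2 * one_way_seconds * 1000


# Assumed distances: ~10,900 km great-circle from New York to Tokyo
# (real cable routes are longer) versus ~50 km to a PoP inside Tokyo.
for label, km in [("New York origin", 10_900), ("Tokyo edge", 50)]:
    print(f"{label}: at least {min_round_trip_ms(km):.1f} ms per round trip")
```

The origin bound works out to roughly 107 ms per round trip, while the local edge is well under 1 ms. A new HTTPS connection needs several round trips (TCP and TLS handshakes before the first response byte), so the gap is multiplied on every fresh connection.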

The Tokyo server stores a copy of the data from the New York server. When the Tokyo device requests a file that the local server already holds, the local server responds immediately, and the long trip across the ocean is eliminated for that request.

This simple mechanism forms the backbone of the modern internet. We will explore exactly how this infrastructure works behind the scenes.

CDN Mental Model and Vocabulary

A CDN question becomes much easier when the vocabulary is stable. This section defines the words that will appear again and again in interviews.

The Core Architectural Concept

A Content Delivery Network is designed to eliminate long-distance network requests. It is a large distributed infrastructure system: providers deploy thousands of high-performance servers in strategic locations around the globe.

The architecture relies on several distinct pieces of hardware working together. The system routes each user request to a nearby, healthy location, commonly using DNS-based routing or anycast. To understand the complete picture, we must define the role of each server type.

The Origin Server

The Origin Server is the absolute source of truth for the application.

This is the primary central server managed directly by the software engineering team. It typically hosts or sits in front of the core database and holds the original master files.

When developers write new code or upload new images, they deploy them strictly to the origin server.

The origin server holds the definitive version of every digital asset. However, a single origin server has limited bandwidth and processing power.

It simply cannot handle millions of global requests simultaneously.

If forced to handle all traffic, its network interface would become congested and start dropping connections. A large part of the distributed network's job is to protect the origin server from this kind of failure.

The Edge Servers

An Edge Server is a specialized machine located at the perimeter of the network. It is called an edge server because it sits at the edge of the network, close to end users. These servers are deployed globally to cover major metropolitan population centers.

The primary job of an edge server is to store temporary copies of files.

This process is known as caching. When a user requests a file, the edge server checks its local cache.

If it has a valid copy, it serves it directly to the user, so the origin server never sees that request. Edge servers are built with large amounts of memory and fast SSD storage so that popular files can be served with minimal delay.
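The check-locally-then-fetch flow described above can be sketched in a few lines. This is a minimal illustration, not how any particular CDN is built: a Python dict stands in for the edge's memory and disk tiers, and `fetch_from_origin` and `fake_origin` are hypothetical stand-ins for the network call to the origin. Expiry and eviction are deliberately left out.

```python
from typing import Callable, Dict, List


class EdgeServer:
    """Minimal edge cache: serve hits locally, fetch misses from the origin.

    `fetch_from_origin` is a hypothetical callable standing in for the
    network request an edge server makes to the origin. Expiry and
    eviction are omitted to keep the hit/miss path visible.
    """

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self.fetch_from_origin = fetch_from_origin
        self.store: Dict[str, bytes] = {}  # stands in for RAM/SSD cache tiers
        self.hits = 0
        self.misses = 0

    def get(self, path: str) -> bytes:
        if path in self.store:
            self.hits += 1  # cache hit: the origin is not contacted
            return self.store[path]
        self.misses += 1  # cache miss: one trip to the origin
        body = self.fetch_from_origin(path)
        self.store[path] = body  # keep a copy for later requests
        return body


# Usage: 1,000 requests for the same file reach the origin only once.
origin_calls: List[str] = []


def fake_origin(path: str) -> bytes:
    origin_calls.append(path)
    return f"contents of {path}".encode()


edge = EdgeServer(fake_origin)
for _ in range(1000):
    edge.get("/logo.png")
print(edge.hits, edge.misses, len(origin_calls))  # 999 1 1
```

Only the first request is a miss; the other 999 are absorbed by the edge, which is exactly the origin-shielding effect the text describes.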

Points of Presence

A Point of Presence is a highly secure physical data center facility. These facilities house large clusters of active edge servers. Network engineers strategically build these data centers in highly connected areas.

They are often located inside or near major internet exchange points.

An internet exchange point is a physical location where different internet service providers link their networks. By placing servers here, the edge network can communicate with local internet providers extremely quickly.

Inside a single facility, dozens of edge servers work together as a unified cluster. Load balancers, whether dedicated hardware or software, distribute incoming requests across the cluster.
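One way load balancers inside a PoP can spread requests while keeping caches efficient is to hash the cache key, so every request for the same URL lands on the same server. The sketch uses rendezvous (highest-random-weight) hashing; this is an illustrative choice, not a claim about how any specific CDN balances traffic, and the server names and URLs are made up.

```python
import hashlib
from collections import Counter
from typing import List


def score(server: str, cache_key: str) -> int:
    """Deterministic pseudo-random weight for a (server, key) pair."""
    digest = hashlib.sha256(f"{server}|{cache_key}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def pick_server(cache_key: str, servers: List[str]) -> str:
    """Rendezvous (highest-random-weight) hashing: highest score wins.

    Any load balancer running this picks the same server for a given URL,
    so each object is cached once per PoP rather than on every server.
    """
    return max(servers, key=lambda server: score(server, cache_key))


servers = [f"edge-{i}" for i in range(8)]
keys = [f"/assets/file-{n}.js" for n in range(10_000)]

# Spread: each of the 8 servers should receive roughly 1/8 of the keys.
load = Counter(pick_server(k, servers) for k in keys)
print(sorted(load.values()))

# Stability: removing edge-7 only moves the keys that edge-7 owned.
moved = sum(
    pick_server(k, servers) != pick_server(k, servers[:-1]) for k in keys
)
print(moved == load["edge-7"])
```

Plain round-robin would also spread load evenly, but each server would then end up caching its own copy of every popular file, shrinking the effective cache size of the PoP.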

This clustered approach ensures high availability for the local geographic region.

The Control Plane and Data Plane

A modern distributed network is logically divided into two distinct operating planes.

The Data Plane consists of the edge servers that actively handle client network traffic. The data plane is entirely responsible for receiving requests and transmitting files back to the client.

The Control Plane is the centralized management system for the distributed network. It does not process user traffic or serve files directly.

Instead, it handles all the administrative configurations and routing rules.

System administrators use the control plane to set caching rules and security policies.

The control plane then automatically pushes these rules out to every single edge server globally. It also collects telemetry data and performance metrics from the edge servers for continuous monitoring.
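The split between the two planes can be illustrated with a toy model. Everything here is hypothetical: the `Config` rule format (path prefix to TTL), the version counter used to discard out-of-order pushes, and the synchronous push loop are assumptions chosen for clarity; real control planes distribute configuration asynchronously with retries. The point is the division of labor: `Edge.ttl_for` runs on the request path (data plane), while `ControlPlane` only publishes rules and collects telemetry.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Config:
    version: int
    # Hypothetical rule format: path prefix -> TTL in seconds.
    ttl_by_prefix: Dict[str, int] = field(default_factory=dict)


class Edge:
    """Data plane: applies pushed config and handles requests."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.config = Config(version=0)
        self.requests = 0

    def apply(self, config: Config) -> None:
        # Ignore duplicate or out-of-order pushes carrying an older version.
        if config.version > self.config.version:
            self.config = config

    def ttl_for(self, path: str) -> int:
        """Longest matching prefix wins; 0 means 'do not cache'."""
        self.requests += 1
        matches = [p for p in self.config.ttl_by_prefix if path.startswith(p)]
        if not matches:
            return 0
        return self.config.ttl_by_prefix[max(matches, key=len)]

    def report(self) -> Dict[str, int]:
        return {"config_version": self.config.version, "requests": self.requests}


class ControlPlane:
    """Control plane: owns configuration and telemetry, never serves traffic."""

    def __init__(self, edges: List[Edge]) -> None:
        self.edges = edges
        self.version = 0

    def publish(self, ttl_by_prefix: Dict[str, int]) -> Config:
        self.version += 1
        config = Config(self.version, dict(ttl_by_prefix))
        for edge in self.edges:  # real systems push asynchronously, with retries
            edge.apply(config)
        return config

    def collect_telemetry(self) -> Dict[str, Dict[str, int]]:
        return {edge.name: edge.report() for edge in self.edges}


edges = [Edge("tokyo-1"), Edge("frankfurt-1")]
control = ControlPlane(edges)
control.publish({"/static/": 86_400, "/static/html/": 300})

print(edges[0].ttl_for("/static/app.js"))           # longest match: /static/
print(edges[0].ttl_for("/static/html/index.html"))  # longest match: /static/html/
print(edges[0].ttl_for("/api/user"))                # no rule matches
print(control.collect_telemetry())
```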

The Cache Model Terminology

Cache key: the lookup key a cache uses to decide whether a stored response can satisfy a request.

At minimum, the cache key includes the request method and target URI, and many caches only store GET responses. A response can also make the key depend on request headers: its Vary header lists the request headers (for example, Accept-Encoding) that must match before the stored response can be reused.
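The cache key rule, including Vary, can be sketched as a two-level lookup: first by method and URI, then by the request header values that the stored response's Vary header names. This is a simplified model under stated assumptions: all variants of a URI are assumed to share one Vary value, header values are compared verbatim without normalization, and an absent header is treated like an empty one. `VaryingCache` and its helpers are hypothetical names.

```python
from typing import Dict, Optional, Tuple

BaseKey = Tuple[str, str]                 # (method, target URI)
VariantKey = Tuple[Tuple[str, str], ...]  # selected request header values


def parse_vary(vary: Optional[str]) -> Optional[Tuple[str, ...]]:
    """Header names listed in Vary; None for 'Vary: *' (never matches)."""
    if not vary:
        return ()
    names = tuple(sorted({n.strip().lower() for n in vary.split(",") if n.strip()}))
    return None if "*" in names else names


def select(request_headers: Dict[str, str], names: Tuple[str, ...]) -> VariantKey:
    # Header names are case-insensitive, so compare them in lowercase.
    lowered = {k.lower(): v for k, v in request_headers.items()}
    return tuple((n, lowered.get(n, "")) for n in names)


class VaryingCache:
    def __init__(self) -> None:
        # Simplification: assume all variants of one URI share a Vary value.
        self.vary_names: Dict[BaseKey, Tuple[str, ...]] = {}
        self.entries: Dict[Tuple[BaseKey, VariantKey], bytes] = {}

    def store(self, method: str, uri: str, request_headers: Dict[str, str],
              response_vary: Optional[str], body: bytes) -> None:
        names = parse_vary(response_vary)
        if names is None:
            return  # "Vary: *" can never be matched, so skip storing
        base = (method.upper(), uri)
        self.vary_names[base] = names
        self.entries[(base, select(request_headers, names))] = body

    def lookup(self, method: str, uri: str,
               request_headers: Dict[str, str]) -> Optional[bytes]:
        base = (method.upper(), uri)
        if base not in self.vary_names:
            return None
        return self.entries.get((base, select(request_headers, self.vary_names[base])))


cache = VaryingCache()
cache.store("GET", "/app.js", {"Accept-Encoding": "gzip"}, "Accept-Encoding", b"<gzip bytes>")

print(cache.lookup("GET", "/app.js", {"accept-encoding": "gzip"}))  # same variant: hit
print(cache.lookup("GET", "/app.js", {"Accept-Encoding": "br"}))    # different variant: miss
```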

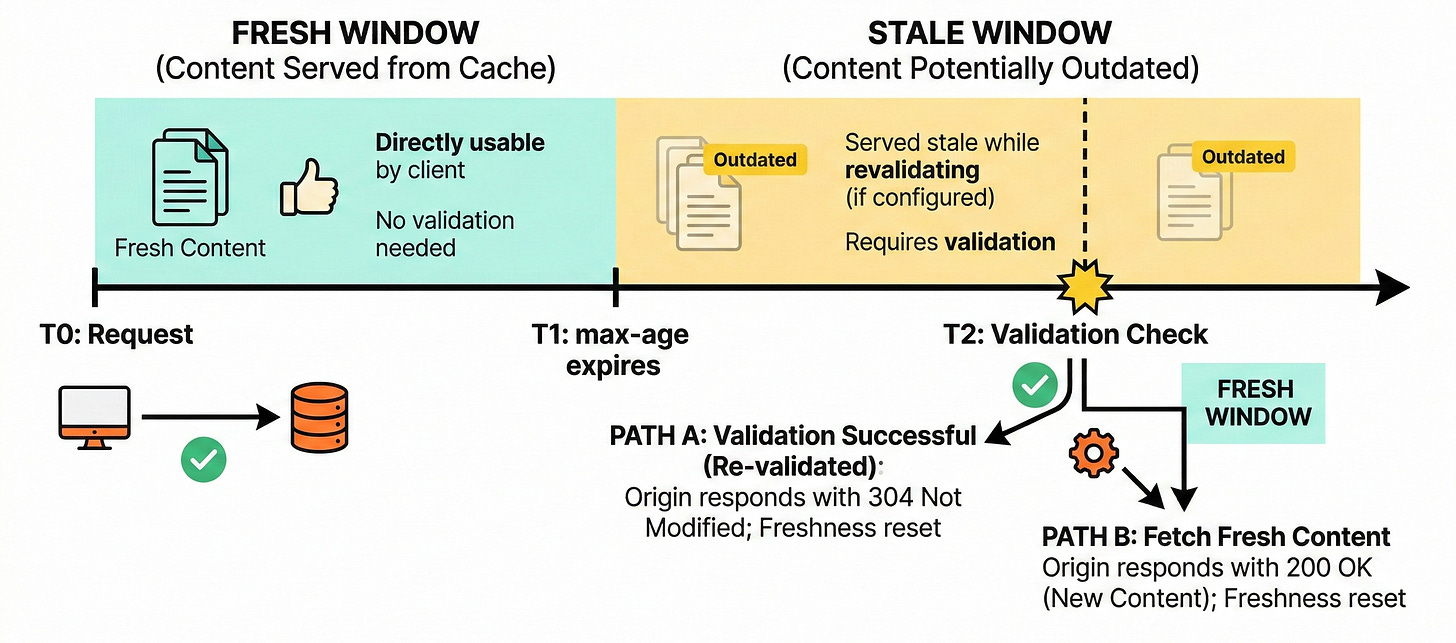

Fresh vs stale: a response is fresh while its age is less than its freshness lifetime. After that it is stale, meaning it should not be reused without validation unless special rules allow stale serving (for example, the stale-while-revalidate and stale-if-error directives). The freshness model is defined by the HTTP caching specification, RFC 9111.
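The "age" in the fresh/stale definition is not simply the current time minus the Date header, because clocks disagree and an upstream cache may already have held the response. RFC 9111 (section 4.2.3) defines a conservative calculation; the sketch below transcribes it, with all times as plain epoch seconds and example values chosen purely for illustration.

```python
def current_age(date_value: float, age_value: float, request_time: float,
                response_time: float, now: float) -> float:
    """Age of a stored response, following the RFC 9111 section 4.2.3 calculation.

    All times are seconds since the epoch. date_value is the response's Date
    header, age_value is its Age header (0 if absent), and request_time /
    response_time are when this cache sent the request and got the response.
    """
    apparent_age = max(0, response_time - date_value)
    response_delay = response_time - request_time
    corrected_age_value = age_value + response_delay
    corrected_initial_age = max(apparent_age, corrected_age_value)
    resident_time = now - response_time  # time spent sitting in this cache
    return corrected_initial_age + resident_time


def is_fresh(freshness_lifetime: float, age: float) -> bool:
    # RFC 9111: response_is_fresh = (freshness_lifetime > current_age)
    return freshness_lifetime > age


# Example: an upstream cache had already held the response for 30 s (Age: 30)
# and the fetch took 1 s. With max-age=120, the copy goes stale at age 120.
age_at_50 = current_age(date_value=1000, age_value=30, request_time=1000,
                        response_time=1001, now=1050)
age_at_100 = current_age(date_value=1000, age_value=30, request_time=1000,
                         response_time=1001, now=1100)
print(age_at_50, is_fresh(120, age_at_50))
print(age_at_100, is_fresh(120, age_at_100))
```

The Age header from upstream is the reason the copy is already 31 seconds old when it arrives, not 1 second, so it goes stale noticeably earlier than a naive clock check would suggest.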

TTL (time to live): in CDN talk, TTL usually means the freshness lifetime of a cached response. In HTTP, TTL-like behavior is controlled by Cache-Control: max-age=... or Expires, and for shared caches such as CDNs, Cache-Control: s-maxage=... takes precedence over both.
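A CDN typically derives a response's TTL from its headers in a fixed order of precedence. The sketch follows the explicit rules in RFC 9111: s-maxage (honored only by shared caches such as CDNs), then max-age, then Expires minus Date. For brevity it assumes canonical header-name casing and a present Date header, and returns None where a real cache might apply a heuristic lifetime instead.

```python
from email.utils import parsedate_to_datetime
from typing import Dict, Optional


def parse_cache_control(value: str) -> Dict[str, Optional[str]]:
    """Split 'public, max-age=600' into {'public': None, 'max-age': '600'}."""
    directives: Dict[str, Optional[str]] = {}
    for part in value.split(","):
        name, _, arg = part.strip().partition("=")
        if name:
            directives[name.strip().lower()] = arg.strip().strip('"') or None
    return directives


def freshness_lifetime(headers: Dict[str, str], shared: bool = True) -> Optional[int]:
    """Seconds a response stays fresh, or None if no explicit TTL is given.

    Precedence: s-maxage (shared caches only), then max-age, then Expires - Date.
    Header names are assumed to be in canonical case for brevity.
    """
    cc = parse_cache_control(headers.get("Cache-Control", ""))
    if shared and cc.get("s-maxage") is not None:
        return int(cc["s-maxage"])
    if cc.get("max-age") is not None:
        return int(cc["max-age"])
    if "Expires" in headers and "Date" in headers:
        try:
            delta = (parsedate_to_datetime(headers["Expires"])
                     - parsedate_to_datetime(headers["Date"]))
        except (TypeError, ValueError):
            return 0  # an invalid date (e.g. "Expires: 0") counts as already expired
        return max(0, int(delta.total_seconds()))
    return None  # no explicit lifetime: a cache may fall back to a heuristic


print(freshness_lifetime({"Cache-Control": "public, max-age=600, s-maxage=3600"}))
print(freshness_lifetime({"Cache-Control": "public, max-age=600, s-maxage=3600"}, shared=False))
print(freshness_lifetime({"Date": "Wed, 21 Oct 2015 07:28:00 GMT",
                          "Expires": "Wed, 21 Oct 2015 08:28:00 GMT"}))
```

The same response can legitimately have different TTLs in a browser (max-age) and at the CDN (s-maxage), which is a common way to let the edge cache longer than clients.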

Validation: a cache can re-check a stored response with the origin using conditional requests. Common validators are ETag and Last-Modified. A conditional request header such as If-None-Match (which carries an ETag) or If-Modified-Since (which carries a Last-Modified date) lets the origin reply 304 Not Modified with no body, saving bandwidth.
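A revalidation round trip can be sketched with ETags. Both the origin handler and the edge helper are hypothetical, and deriving the ETag from a SHA-256 hash of the body is just one possible scheme. The handler applies the weak comparison that If-None-Match uses, so the W/"..." and "..." forms of the same tag match.

```python
import hashlib
from typing import Dict, Tuple

Response = Tuple[int, Dict[str, str], bytes]


def make_etag(body: bytes) -> str:
    # Hypothetical scheme: a strong ETag derived from a hash of the content.
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def opaque(tag: str) -> str:
    # If-None-Match uses weak comparison, so W/"x" and "x" match.
    tag = tag.strip()
    return tag[2:] if tag.startswith("W/") else tag


def origin_get(body: bytes, request_headers: Dict[str, str]) -> Response:
    """Origin side: answer 304 with no body when the client's ETag still matches."""
    etag = make_etag(body)
    if_none_match = request_headers.get("If-None-Match")
    if if_none_match is not None:
        # Simplification: split on commas (ETags containing commas are not handled).
        candidates = [opaque(t) for t in if_none_match.split(",")]
        if "*" in candidates or opaque(etag) in candidates:
            return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag}, body


def revalidate(cached_body: bytes, cached_etag: str,
               current_origin_body: bytes) -> Tuple[bytes, int]:
    """Edge side: returns the body to serve and how many body bytes crossed the network."""
    status, _headers, body = origin_get(current_origin_body, {"If-None-Match": cached_etag})
    if status == 304:
        return cached_body, 0  # stored copy is still valid; reuse it
    return body, len(body)     # content changed; replace the stored copy


v1 = b"console.log('v1');" * 1000
etag_v1 = make_etag(v1)
print(revalidate(v1, etag_v1, v1)[1])             # unchanged content
print(revalidate(v1, etag_v1, b"v2 content")[1])  # changed content
```

When the content is unchanged, the origin sends only headers, so no body bytes cross the network; when it has changed, the full new body comes back with a new ETag.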

Invalidation: removal or disabling of stored responses. HTTP requires a cache to invalidate the stored responses for a target URI when it sees a non-error (2xx or 3xx) response to an unsafe method such as PUT, POST, or DELETE, because those methods can change server state. CDNs also support explicit invalidation (purging), but that is outside pure HTTP and is usually a managed-cache feature.
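Both kinds of invalidation can be shown side by side. HTTP's rule only affects caches that the unsafe request actually passes through, so a POST handled by one PoP leaves copies in other PoPs untouched; that gap is one reason CDNs offer explicit purges. `SharedCache` and `purge_prefix` are hypothetical, and the prefix purge stands in for a managed-CDN feature that is not part of HTTP.

```python
from typing import Dict, Tuple

SAFE_METHODS = {"GET", "HEAD", "OPTIONS", "TRACE"}


class SharedCache:
    def __init__(self) -> None:
        self.entries: Dict[Tuple[str, str], bytes] = {}  # (method, uri) -> body

    def store(self, method: str, uri: str, body: bytes) -> None:
        self.entries[(method.upper(), uri)] = body

    def invalidate(self, uri: str) -> None:
        """Drop every stored response for this target URI."""
        for key in [k for k in self.entries if k[1] == uri]:
            del self.entries[key]

    def on_response(self, method: str, uri: str, status: int) -> None:
        """HTTP rule: a non-error response to an unsafe method invalidates the URI."""
        if method.upper() not in SAFE_METHODS and 200 <= status < 400:
            self.invalidate(uri)

    def purge_prefix(self, prefix: str) -> int:
        """Hypothetical CDN-style purge; not part of HTTP. Returns entries removed."""
        doomed = [k for k in self.entries if k[1].startswith(prefix)]
        for key in doomed:
            del self.entries[key]
        return len(doomed)


tokyo, frankfurt = SharedCache(), SharedCache()
for pop in (tokyo, frankfurt):
    pop.store("GET", "/cart/42", b"cart v1")
    pop.store("GET", "/img/a.png", b"...")

# The POST passes through the Tokyo PoP only, so only Tokyo applies the HTTP rule.
tokyo.on_response("POST", "/cart/42", 200)
print(("GET", "/cart/42") in tokyo.entries, ("GET", "/cart/42") in frankfurt.entries)

# An explicit purge issued to every PoP removes the remaining stale copy.
print(sum(pop.purge_prefix("/cart/") for pop in (tokyo, frankfurt)))
```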