Scalability: The System Design Concept Behind Every High-Traffic App

Learn how scalable systems handle massive traffic spikes. Covers vertical vs. horizontal scaling, load balancers, stateless design, read replicas, sharding, caching, message queues, and microservices.

Software applications frequently collapse when a sudden wave of network traffic arrives. Every web application relies on underlying hardware to process incoming network requests.

When thousands of requests hit the system simultaneously, those hardware components can reach their maximum capacity almost instantly. Memory fills up, and the CPU can no longer keep pace with new incoming requests.

This resource exhaustion causes service outages and dropped connections. Building an architecture that survives sudden traffic spikes is a foundational engineering requirement.

This specific architectural discipline is known as system scalability.

Understanding scalability is critical for engineers building highly available platforms.

Scalability dictates whether an application survives massive growth or crashes entirely. It provides the technical framework necessary to keep software running smoothly under immense pressure.

Mastering these architectural concepts is essential for anyone entering the software engineering field today.

Understanding the Core Concept

Scalability is the structural ability of a software system to handle increasing computational workloads gracefully.

When an application receives more traffic, a scalable architecture handles it by adding hardware resources. It keeps response times fast without requiring engineers to rewrite the underlying codebase.

Behind the scenes, every network request requires specific amounts of processing power and active memory.

If a server processes ten requests per second, it uses a small fraction of its hardware.

If the rate jumps to ten thousand requests per second, the same hardware can no longer process every request in time. Requests queue up, slow down, or fail.
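The back-of-the-envelope estimate below puts numbers on the ten versus ten thousand comparison. The 8 cores and 20 ms of CPU time per request are hypothetical figures chosen for illustration. The model also assumes every request is purely CPU-bound, which real workloads rarely are.

```python
def max_throughput(cores: int, cpu_seconds_per_request: float) -> float:
    """Rough upper bound on requests/second when every request is CPU-bound."""
    return cores / cpu_seconds_per_request


# Hypothetical server: 8 cores, 20 ms of CPU time per request (about 400 req/s).
capacity = max_throughput(cores=8, cpu_seconds_per_request=0.020)

for rate in (10, 10_000):
    utilization = rate / capacity
    print(f"{rate:>6} req/s needs {utilization:.1%} of one server's CPU")
```

At 10 req/s this hypothetical server is nearly idle. At 10,000 req/s it would need about 25 times its total CPU capacity, so the excess requests have nowhere to go.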

A scalable design anticipates this physical limitation beforehand.

The system expands its capacity to match the incoming workload. Engineers achieve this expansion by following specific architectural patterns and network configurations.

The First Approach: Vertical Scaling

Engineers traditionally solve hardware limitations using a strategy called vertical scaling.

This approach is commonly known as scaling up. It typically involves shutting down the existing application server and physically replacing its internal components.

Engineers install a faster central processing unit and add significantly more memory modules.

The primary advantage of vertical scaling is simplicity.

The application codebase remains unchanged because the software still runs on a single machine. This makes vertical scaling a fast fix for minor performance bottlenecks in new projects. However, it has a hard physical ceiling that eventually becomes a problem.

Hardware manufacturers can only fit a finite amount of computing power onto a single physical motherboard. Once the server reaches maximum hardware specifications, it cannot scale any further.

Furthermore, relying entirely on a single powerful server creates a single point of failure.

If that specific physical machine experiences a hardware malfunction, the entire platform goes offline immediately.

The Ultimate Solution: Horizontal Scaling

To overcome physical hardware limitations, modern engineers utilize horizontal scaling. This advanced strategy is widely known as scaling out the architecture.

Instead of upgrading one massive computer, engineers connect dozens of standard servers together over a network. These independent machines work simultaneously to process the total incoming traffic volume.

Horizontal scaling offers far greater growth potential than upgrading a single machine. Shared components such as the database can still become bottlenecks.

If the network traffic doubles overnight, engineers simply provision and connect additional servers to the cluster. The total processing capacity increases with every single machine added to the network.
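Capacity grows roughly in proportion to the number of servers, so sizing a cluster is mostly division. The sketch below rests on three illustrative assumptions, none of them a recommendation:

- each server handles a hypothetical 400 req/s,
- each machine is kept at or below 70% utilization to leave headroom,
- one spare server is added on top.

```python
import math


def servers_needed(peak_rps: float, per_server_rps: float,
                   target_utilization: float = 0.7, spares: int = 1) -> int:
    """Estimate how many servers a cluster needs for a given peak load.

    target_utilization: assumed ceiling on each server's load (70% by default).
    spares: extra machines so the cluster keeps full capacity if that many fail.
    """
    usable_per_server = per_server_rps * target_utilization
    return math.ceil(peak_rps / usable_per_server) + spares


print(servers_needed(10_000, 400))  # 37
print(servers_needed(20_000, 400))  # 73
```

Doubling the peak roughly doubles the cluster, which is the proportional growth that makes scaling out attractive.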

This distributed architecture also provides strong fault tolerance.

Fault tolerance is the ability of a system to continue operating despite hardware failures.

If one server suffers a total hardware failure, the system as a whole keeps running. The remaining healthy servers absorb its share of the workload, as long as the cluster has enough spare capacity.

Routing Traffic With Load Balancers

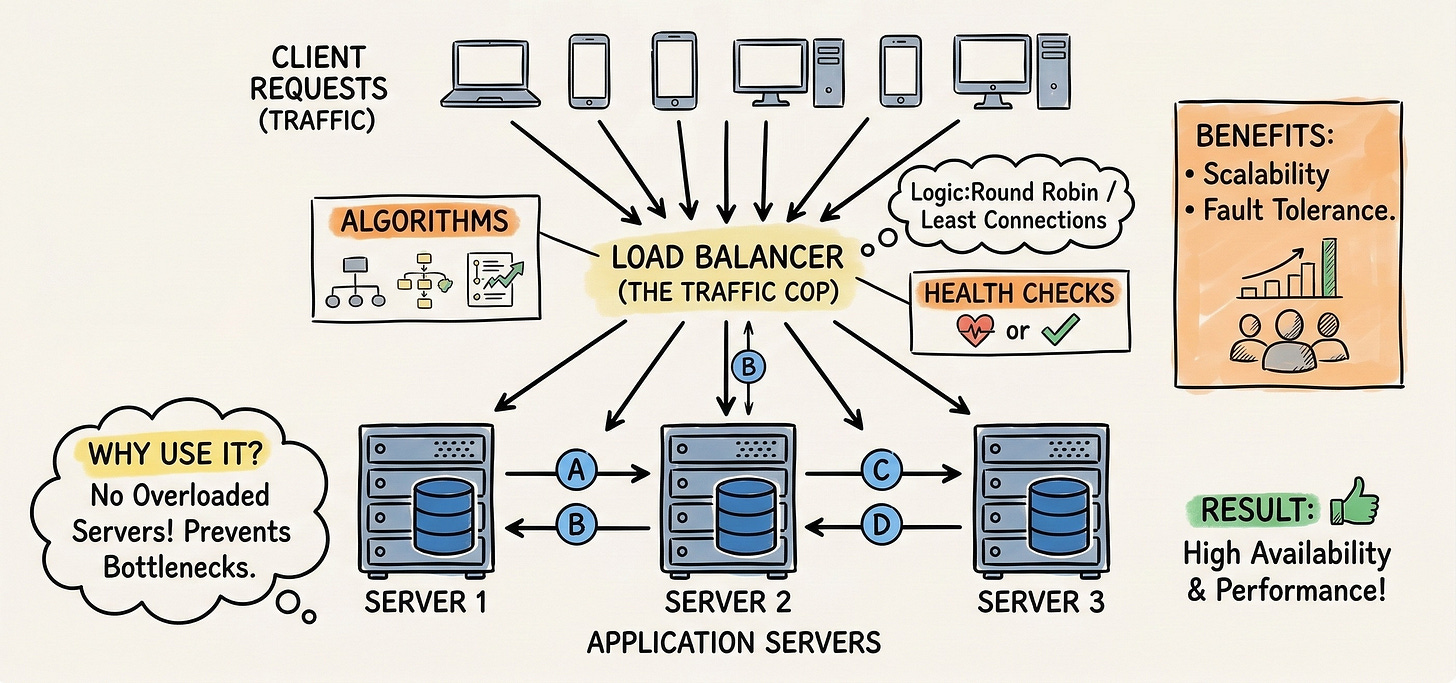

When an architecture uses dozens of independent web servers, clients should not have to know which server to contact. The system needs a central traffic director to distribute incoming requests efficiently. This critical routing component is called a load balancer.

A load balancer acts as the single entry point for all incoming network traffic. When a client application sends a network request, it hits the load balancer first.

The load balancer then analyzes the connected server fleet and forwards the request to an available machine.

The selected web server processes the computation and returns the final response through the load balancer.

Behind the scenes, load balancers use routing algorithms to spread the workload across the servers.

A basic round robin algorithm sends the first request to the first server and the second to the second. It continues in order and wraps back to the first server after reaching the last.
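Below is a minimal round robin picker in Python. It uses `itertools.cycle` to wrap back to the first server after the last. The server names are hypothetical. A production balancer would also have to handle servers joining and leaving the pool, which this sketch ignores.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed rotation, wrapping around after the last one."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("need at least one server")
        self._rotation = cycle(servers)

    def pick(self) -> str:
        return next(self._rotation)


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([balancer.pick() for _ in range(5)])
# ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```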

More advanced algorithms monitor the active processor usage of every individual machine. They dynamically route incoming requests to the server demonstrating the lowest current workload.
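Routing to the least busy server can be sketched as a minimum over the most recent load reports. The sketch assumes each server periodically reports its CPU usage as a fraction between 0 and 1. The report format and function name are hypothetical. A closely related variant, least connections, ranks servers by their number of open connections instead.

```python
def pick_least_loaded(cpu_usage: dict[str, float]) -> str:
    """Return the server whose most recently reported CPU usage is lowest."""
    if not cpu_usage:
        raise ValueError("no servers available")
    return min(cpu_usage, key=cpu_usage.get)


reported = {"app-1": 0.82, "app-2": 0.35, "app-3": 0.61}
print(pick_least_loaded(reported))  # app-2
```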

Additionally, the load balancer continuously monitors the health of every connected server. It sends automated health-check requests to the fleet every few seconds.

If a server fails to respond, typically for a configurable number of consecutive checks, the load balancer removes it from the active routing list. It stops sending traffic to the broken machine to prevent application errors. It resumes once the server passes its health checks again.
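The health-check loop can be sketched as a small pool that counts consecutive failed probes per server. This is a simplified model:

- The `probe` function stands in for a real network check, such as an HTTP request to a health endpoint.
- The removal threshold of two consecutive failures is a hypothetical setting. Many real load balancers expose a similar configurable threshold.
- The class and method names are illustrative.

```python
from typing import Callable


class HealthCheckedPool:
    """Tracks which servers may receive traffic, based on periodic probes.

    A server leaves the routing list after `unhealthy_threshold` consecutive
    failed probes and rejoins as soon as a probe succeeds again.
    """

    def __init__(self, servers: list[str], unhealthy_threshold: int = 2) -> None:
        self.failures = {server: 0 for server in servers}
        self.threshold = unhealthy_threshold

    def run_checks(self, probe: Callable[[str], bool]) -> None:
        # A real balancer repeats this every few seconds; here each call is one round.
        for server in self.failures:
            if probe(server):
                self.failures[server] = 0
            else:
                self.failures[server] += 1

    def active(self) -> list[str]:
        """Servers that should currently receive traffic."""
        return [s for s, fails in self.failures.items() if fails < self.threshold]


pool = HealthCheckedPool(["app-1", "app-2", "app-3"])
down = {"app-2"}  # simulate one crashed machine
for _ in range(2):
    pool.run_checks(lambda server: server not in down)
print(pool.active())  # ['app-1', 'app-3']

down.clear()  # app-2 recovers
pool.run_checks(lambda server: server not in down)
print(pool.active())  # ['app-1', 'app-2', 'app-3']
```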

Designing Stateless Architecture

Horizontal scaling forces engineers to change how servers manage temporary session data. This temporary data is known as the state of the application.
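The problem is easy to reproduce with two toy servers whose requests alternate, as they would under round robin. This simplified illustration uses hypothetical class and method names and runs two setups:

- In the first, each server keeps sessions in its own memory.
- In the second, both servers share one store. It stands in for the external cache or database that stateless designs commonly rely on.

```python
class AppServer:
    """Toy web server; `sessions` is whatever store it reads and writes."""

    def __init__(self, name: str, sessions: dict[str, str]) -> None:
        self.name = name
        self.sessions = sessions

    def login(self, session_id: str, user: str) -> None:
        self.sessions[session_id] = user

    def whoami(self, session_id: str) -> str:
        return self.sessions.get(session_id, "anonymous")


# Stateful: each server keeps sessions in its own memory.
a, b = AppServer("app-1", {}), AppServer("app-2", {})
a.login("s42", "alice")
print(b.whoami("s42"))  # anonymous: the next request hit a server that never saw the login

# Stateless servers: both read one shared store outside the web tier.
shared: dict[str, str] = {}
a, b = AppServer("app-1", shared), AppServer("app-2", shared)
a.login("s42", "alice")
print(b.whoami("s42"))  # alice
```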


Subscribe to System Design Nuggets to keep reading this post and get 7 days of free access to the full post archives.