Preventing Cascading Failures: How to Decouple Microservices with Async Design

Discover how blocking and non-blocking operations impact server performance and learn how message queues decouple microservices.

Software applications often freeze or crash when traffic spikes suddenly. A common cause is that server components get stuck waiting for data to arrive over the network.

A single slow database can stall every service that depends on it. Each stalled request holds on to threads and memory while it waits, and once those limited resources run out, the entire infrastructure can go offline.

Understanding how data moves across a network is critical for building resilient software.

Engineers must choose the right communication model to prevent these system-wide bottlenecks. That choice largely determines whether an application survives a massive surge or collapses.

The Core Problem in Distributed Systems

Early software applications were typically built as single monolithic structures. In a single program, different functions call each other directly within the active system memory.

This internal communication is incredibly fast and highly reliable. There is no external network involved, which makes data transfer virtually instant.

Modern software development utilizes a microservices architecture.

This approach splits a large application into dozens of smaller, independent services, each typically running as its own process or server.

While this makes each piece easier to build and deploy on its own, it introduces a significant new problem. Functions no longer live in the same memory space.

When a component needs information, the data must travel over a network. This travel introduces a delay known as network latency. It also introduces the constant risk of hardware failure, dropped data packets, or overloaded destination servers.
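To make the difference concrete, here is a minimal Python sketch that compares a direct in-process call with a simulated remote call. The function names, the sample data, and the 50 ms delay are illustrative assumptions rather than measurements from a real system; `time.sleep` simply stands in for the network round trip.

```python
import time

PRICES = {"book": 12.5}  # hypothetical in-memory data


def local_lookup(item):
    """In-process call: a plain dictionary read in the same memory space."""
    return PRICES[item]


def remote_lookup(item, latency_s=0.05):
    """Simulated network call: time.sleep stands in for the round trip."""
    time.sleep(latency_s)  # assumed latency, not a real measurement
    return PRICES[item]


def timed(fn, *args):
    """Return (result, elapsed seconds) for a single call."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    _, local_s = timed(local_lookup, "book")
    _, remote_s = timed(remote_lookup, "book")
    print(f"local:  {local_s * 1000:.3f} ms")
    print(f"remote: {remote_s * 1000:.3f} ms")
```

Both calls return the same value, but the simulated remote call takes far longer. A real network call would also be able to fail outright, which this sketch does not model.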

System architects must design communication pathways that can survive these inevitable network failures.

The Hidden Cost of Waiting

Sending data over a network always takes time, and the amount is rarely predictable.

The fundamental problem in system design is deciding what the software should do during this waiting period.

The software can either wait patiently for the network request to finish or move on.

If a system handles this waiting period poorly, it will crash when multiple users connect simultaneously. Developers must actively choose between forcing the system to wait or allowing the system to perform other tasks.

We categorize these two distinct approaches as synchronous and asynchronous communication.

The sections below look at how each method works behind the scenes, and at how each one uses server memory and processing power to keep applications running smoothly.

Understanding Synchronous Communication

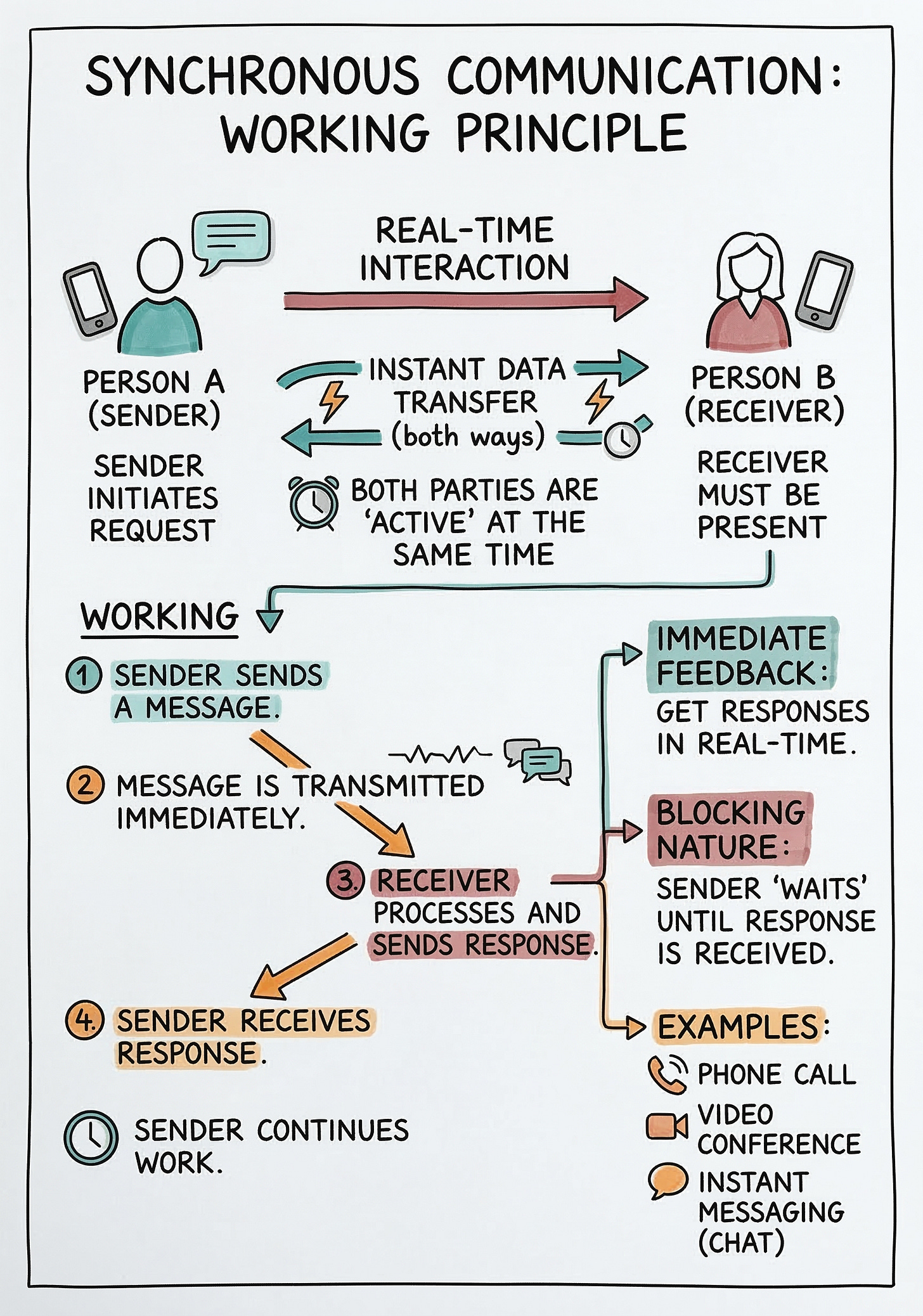

Synchronous communication means that operations happen in a strict and sequential order.

When a software service sends a request to another service, the code that made the request pauses at that point.

The sender waits patiently until it receives a complete response from the receiving server.

Only after receiving the final response will the sender move on to execute the next line of code. We call this specific behavior a blocking operation.

That path of execution in the sender is blocked from moving forward. It cannot perform any other task until the current network operation concludes.
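A blocking call can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `fetch_profile` is a hypothetical remote call, and `time.sleep` simulates the time spent waiting on the network.

```python
import time


def fetch_profile(user_id, delay_s=0.1):
    """Hypothetical remote call; time.sleep simulates waiting on the network."""
    time.sleep(delay_s)
    return {"id": user_id}


def handle_sequentially(user_ids, delay_s=0.1):
    """Issue one blocking call at a time; each must finish before the next starts."""
    start = time.perf_counter()
    profiles = []
    for user_id in user_ids:
        # Execution stops here until fetch_profile returns.
        profiles.append(fetch_profile(user_id, delay_s))
    return profiles, time.perf_counter() - start


if __name__ == "__main__":
    profiles, elapsed = handle_sequentially([1, 2, 3])
    print(f"{len(profiles)} profiles in {elapsed:.2f} s")
```

Because each call blocks until it returns, the waits add up: three calls at roughly 0.1 s each take about 0.3 s in total, even though the program does almost no computation.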

How Threads Work Behind the Scenes

To understand why blocking causes system delays, we must look at how servers process tasks.

A server uses a thread to handle incoming computational work.

A thread is an independent sequence of instructions that the operating system schedules onto the processor.

A typical thread-based server has only a limited number of threads available at any given time. When a request arrives, the server assigns a single thread to handle that request.

If the code requires complex data from a separate database, the thread initiates a network call.
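The effect of a limited thread pool can be approximated with Python's standard `ThreadPoolExecutor`. This is a rough sketch, not a model of any particular server: the pool sizes, the request count, and the simulated database delay are all assumptions chosen for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id, db_delay_s):
    """One request occupies one thread while it waits on the simulated database."""
    time.sleep(db_delay_s)  # stand-in for a blocking database call
    return request_id


def serve(num_requests, pool_size, db_delay_s=0.2):
    """Run all requests through a fixed-size pool; return total elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=pool_size) as pool:
        futures = [
            pool.submit(handle_request, i, db_delay_s)
            for i in range(num_requests)
        ]
        for future in futures:
            future.result()  # wait for every request to finish
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"pool of 2: {serve(4, pool_size=2):.2f} s")
    print(f"pool of 4: {serve(4, pool_size=4):.2f} s")
```

With four requests and only two threads, the last two requests cannot start until a thread is free, so the run takes roughly twice as long as it does with four threads. The threads spend that time idle, waiting on the simulated database.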