Why Caching Will Fail Your System Design Interview (And What to Do Instead)

Stop failing system design interviews by overusing caches. Learn how to fix high latency at the root using database indexing, read replicas, materialized views, and asynchronous processing.

Software applications inevitably face performance bottlenecks as data volume increases.

A database query that initially executed in milliseconds might suddenly require several seconds to complete.

This delay is known as high latency.

High latency stalls application servers and degrades overall system functionality.

When a database struggles to return information quickly, developers often seek immediate relief. The initial reaction of many developers is to introduce a fast memory layer into the architecture.

This fast memory layer intercepts data requests and serves information faster than a traditional storage drive. While this approach seems perfectly logical, it frequently masks deeper structural flaws.

Adding temporary memory layers introduces massive complexity and creates new points of failure. System architecture becomes highly fragile when memory acts as a bandage for poorly optimized databases.

Understanding the root cause of slow queries is absolutely necessary for building robust software.

Engineers must learn how to diagnose latency at the foundational level. Relying on superficial fixes prevents developers from understanding true system design.

Mastering internal database mechanics separates average programmers from senior architectural engineers.

We will explore the exact reasons why memory storage fails and the techniques used instead.

The Mechanics of Memory Storage

To understand why systems become fragile, we must examine how temporary storage operates.

Engineers define Latency as the total time required for a system to process a request and return a response.

When latency is high, applications feel sluggish and unresponsive. The fundamental bottleneck usually lies within the main database.
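To make the definition of latency concrete, here is a minimal sketch that times a single request from start to finish. The names `measure_latency` and `slow_query` are hypothetical, and the 50 ms sleep only stands in for a slow database call; it is not a real measurement.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def measure_latency(handler: Callable[[], T]) -> Tuple[T, float]:
    """Run one request and return its result plus the elapsed seconds."""
    start = time.perf_counter()
    result = handler()
    elapsed = time.perf_counter() - start
    return result, elapsed


def slow_query() -> str:
    # Assumption: the sleep simulates a query that takes about 50 ms.
    time.sleep(0.05)
    return "row"


result, seconds = measure_latency(slow_query)
print(f"query returned {result!r} in {seconds * 1000:.1f} ms")
```

Timing the request where it enters the system, rather than guessing, is usually the first step in finding out which query is actually slow.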

Standard databases write information to permanent storage drives to ensure data safety. Reading from these physical or virtual disks is slower than reading from memory, because every read pays for device access and I/O overhead.

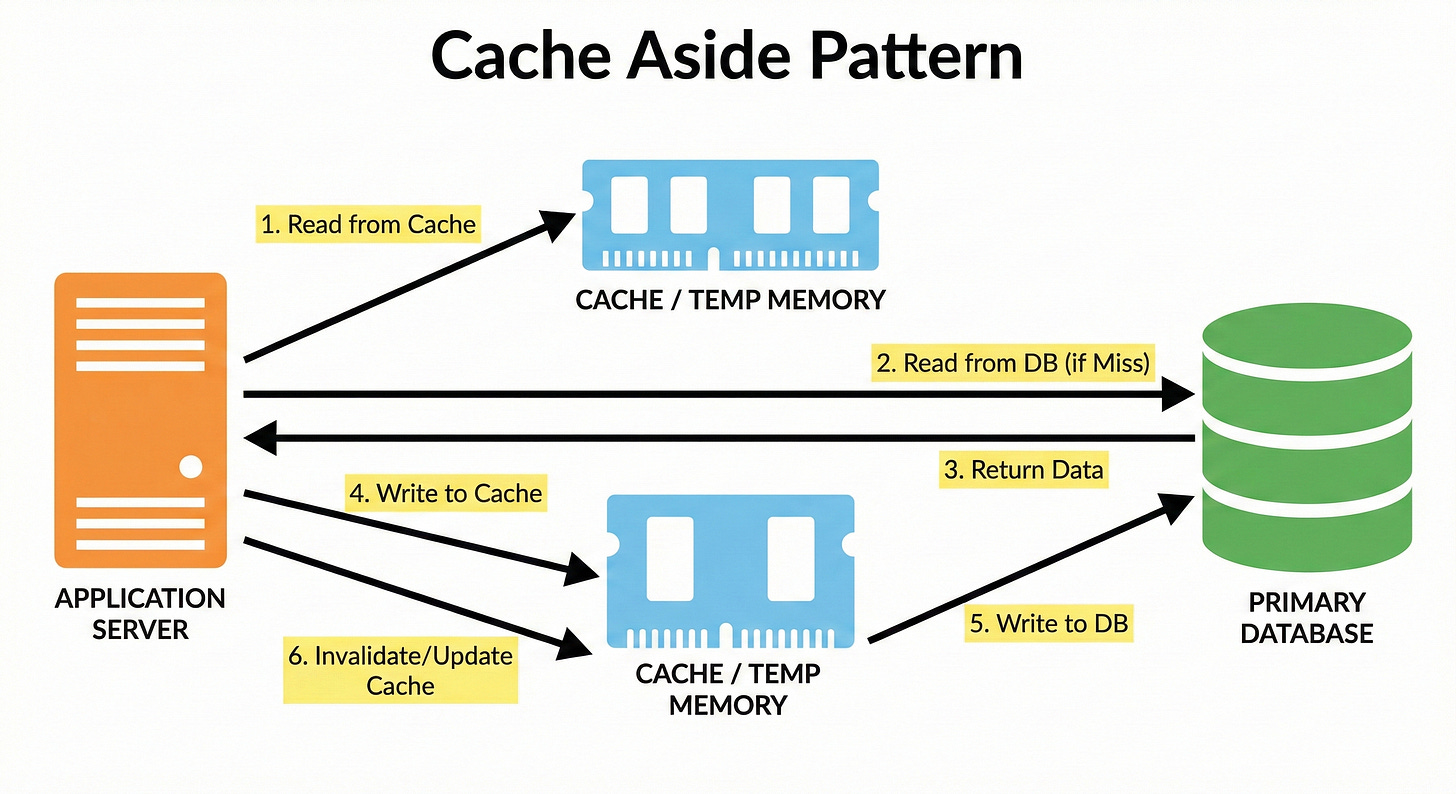

To bypass these delays, developers implement a Cache.

A cache is a high speed storage layer that holds a duplicate copy of frequently accessed data.

This caching layer stores data strictly in Random Access Memory, commonly known as RAM.

Accessing RAM is orders of magnitude faster than reading from a permanent storage drive. When an application needs data, it checks the cache first.

If the data exists in memory, the application skips the database entirely.

This successful retrieval from memory is called a Cache Hit.

If the data is missing, the application queries the main database. This failure to find data in memory is called a Cache Miss. The application takes the returned information and saves a copy into the cache.

This duplicate copy ensures the next identical request will be fast.

This standard software flow is known as the Cache Aside Pattern. It looks like a perfect solution for speeding up slow application queries. However, this pattern introduces severe technical debt into the system architecture.
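The read path described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production design: the `CacheAside` class is hypothetical, a plain dictionary stands in for the RAM cache, and another dictionary stands in for the main database.

```python
from typing import Callable, Dict, Optional


class CacheAside:
    """Toy cache-aside reader; a dict plays the role of the memory cache."""

    def __init__(self, load_from_db: Callable[[str], Optional[str]]) -> None:
        self._load_from_db = load_from_db
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> Optional[str]:
        # Cache hit: serve from memory and skip the database entirely.
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        # Cache miss: query the database, then save a copy for next time.
        self.misses += 1
        value = self._load_from_db(key)
        if value is not None:
            self._cache[key] = value
        return value


# Assumption: this dict stands in for the main database.
database = {"user:1": "Ada"}
reader = CacheAside(database.get)

first = reader.get("user:1")   # miss: loaded from the database
second = reader.get("user:1")  # hit: served from memory
print(first, second, reader.hits, reader.misses)
```

Note that nothing in this sketch ever removes or refreshes an entry once it is cached, which is exactly where the trouble starts.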

Why Caching Makes Systems Fragile

While the cache aside pattern improves reading speeds, it fundamentally changes the system architecture. Data now lives in two completely separate network locations. Managing duplicate data across multiple systems introduces severe technical challenges.

These challenges often cause more system downtime than the original slow database queries.

The Nightmare of Stale Data

One of the hardest challenges in system design is Cache Invalidation.

Invalidation is the programmatic process of deleting or updating memory data when the main database changes.

If a record changes in the main database, the cached copy must be updated or deleted as well.

If that synchronization fails, the cache keeps serving obsolete information until the entry expires or is evicted, which can mean indefinitely when no expiry is set.

This obsolete information is called Stale Data.
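A small sketch shows how stale data appears and how invalidation on write avoids it. Everything here is hypothetical: two dictionaries stand in for the database and the cache, and the function names are made up for illustration.

```python
from typing import Dict, Optional

# Assumption: plain dicts stand in for the main database and the RAM cache.
database: Dict[str, str] = {"user:1": "old@example.com"}
cache: Dict[str, str] = {}


def read(key: str) -> Optional[str]:
    """Cache-aside read: check memory first, fall back to the database."""
    if key in cache:
        return cache[key]
    value = database.get(key)
    if value is not None:
        cache[key] = value
    return value


def write_without_invalidation(key: str, value: str) -> None:
    # Only the database changes; the cached copy is left untouched.
    database[key] = value


def write_with_invalidation(key: str, value: str) -> None:
    # Update the database, then delete the cached copy so the next
    # read misses and reloads the current value.
    database[key] = value
    cache.pop(key, None)


read("user:1")  # warms the cache with the old address

write_without_invalidation("user:1", "new@example.com")
stale = read("user:1")  # still the old address: stale data

write_with_invalidation("user:1", "newer@example.com")
fresh = read("user:1")  # cache entry was deleted, so this reloads
print(stale, fresh)
```

Even this simple version assumes the database write and the cache delete both succeed. In a real system the two live on different machines, and a failure between the two steps leaves the old value in memory.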
