Stop Grinding LeetCode: Master These 6 Patterns to Solve Any Problem

Stop memorizing thousands of LeetCode solutions. Master the 6 essential algorithmic patterns, including Sliding Window, Two Pointers, and Fast & Slow Pointers, to solve any coding interview question.

What if I told you that the thousands of coding interview questions out there are actually just the same 15 questions, recycled over and over again?

When you first start preparing for technical interviews, the volume of concepts feels overwhelming. You solve a problem, feel good, and then get crushed by a slight variation of it the next day. It feels like you are climbing a mountain that keeps growing.

The truth is, you don’t need to be a competitive programming genius to ace these interviews. You just need a toolkit.

Instead of learning ad-hoc solutions for specific problems, you need to master Algorithmic Patterns. These are reusable blueprints that can be applied to solve entire categories of problems.

By mastering just a few of these, you can instantly solve hundreds of LeetCode challenges without ever having seen them before.

Let’s stop guessing and start engineering. We’ll start by analyzing the mistake everyone makes: The Brute Force Trap.

The Brute Force Trap

Before we look at the patterns, we must understand what we are trying to fix.

When you first learn to code, you solve problems by checking every single possibility.

If you need to find a pair of numbers that add up to a target, the natural instinct is to pick the first number and check it against every other number. Then pick the second number and check it against every other number.

This is called a Brute Force approach.

It works, but it is inefficient.

In computer science terms, we measure efficiency using Big O Notation, which describes how execution time grows as input data increases.

A brute force approach often involves nested loops (a loop inside a loop).

If you have a list of 1,000 items, a nested loop might perform 1,000 x 1,000 operations. That is one million operations.

If the input grows to 1,000,000 items, the computation becomes impossible to finish in a reasonable time.

The patterns we will discuss allow us to solve these problems by passing through the data only once or twice, drastically reducing execution time.

Pattern 1: The Sliding Window

The Sliding Window pattern is one of the most effective techniques for processing arrays or strings. It converts strictly nested-loop problems into linear-time solutions.

The Core Concept

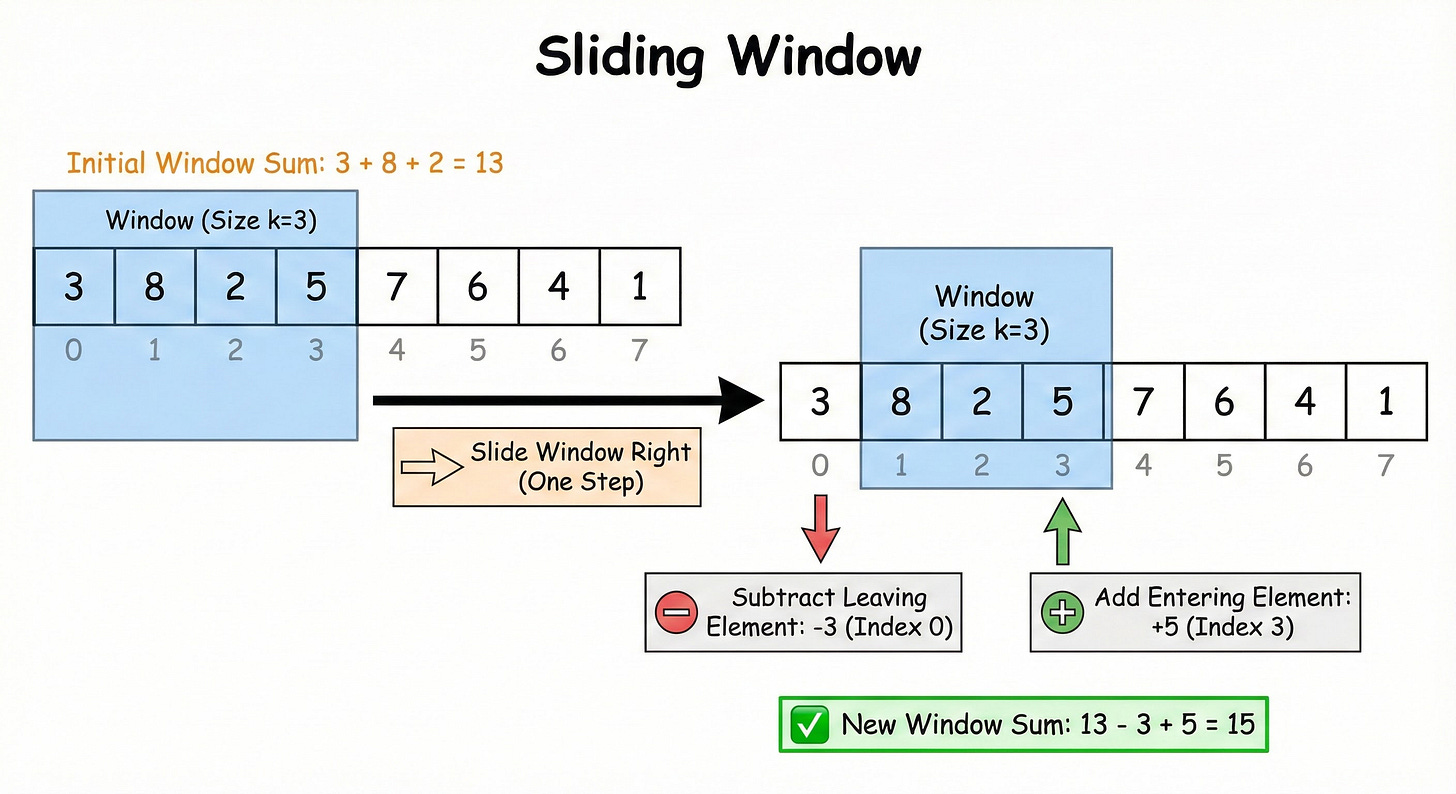

Imagine you have a long array of numbers, and you need to calculate a value based on a contiguous sub-group of these numbers. For example, finding the maximum sum of any contiguous subarray of size k.

The brute force method calculates the sum of the first k numbers. Then it moves to the second number, calculates the sum of the next k numbers, and so on. This approach re-calculates the sum of the overlapping elements repeatedly.

The Sliding Window optimizes this by maintaining a running state. It treats the sub-group as a “window” that moves across the data.

How It Works Behind the Scenes

Instead of recalculating the entire sum for every position, you only make adjustments based on the data entering and leaving the window.

Initialize: Calculate the result for the first window (indices 0 to

k-1).Slide: Move the window one index to the right.

Update: To get the new sum, you do not add all the numbers again. You simply subtract the value that just exited the window on the left and add the value that just entered the window on the right.

This reduces the operation from adding k numbers to just two simple arithmetic operations per step.

When to Use It

You should consider this pattern if the problem asks for metrics regarding a contiguous section of the data. Look for keywords like:

“Find the maximum sum of a subarray of size K.”

“Find the longest substring with distinct characters.”

“Find the smallest subarray with a sum greater than X.”

Pattern 2: Two Pointers

The Two Pointers pattern allows you to process data structures like arrays or strings by tracking two different positions simultaneously.

The Core Concept

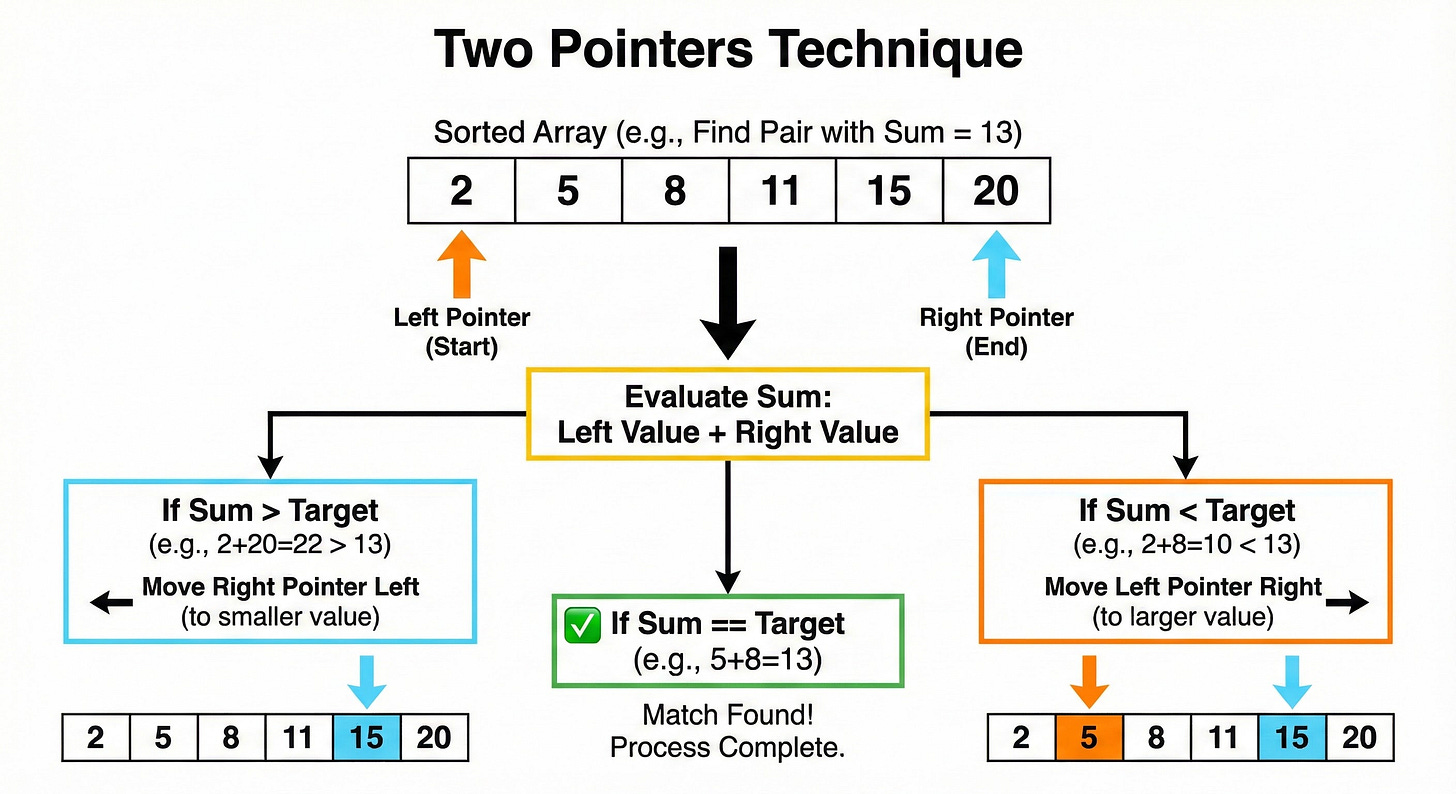

This pattern is particularly powerful when dealing with sorted arrays.

Suppose you need to find two numbers in a sorted array that add up to a specific target. A standard loop would check every combination. However, because the array is sorted, you possess extra information: numbers on the left are smaller, and numbers on the right are larger.

You can place one pointer at the very beginning (Left) and one pointer at the very end (Right).

How It Works Behind the Scenes

You evaluate the sum of the numbers at the Left and Right pointers.

Sum equals Target: You have found the answer.

Sum is too small: Since the array is sorted, you need a larger number to reach the target. The only way to increase the sum is to move the Left pointer one step to the right (to a larger value).

Sum is too large: You need a smaller number. You move the Right pointer one step to the left (to a smaller value).

You repeat this logic until the pointers meet. This technique ensures you evaluate every number at most once, guaranteeing a highly efficient solution.

When to Use It

Look for these requirements:

The input array is sorted (this is the strongest indicator).

You need to find a pair of elements that satisfy a condition.

You need to reverse an array or string (swapping the start and end elements and moving inward).

Pattern 3: Fast and Slow Pointers

This pattern is a variation of the Two Pointers method, often used to detect cycles or find specific positions in a linear data structure like a Linked List.

The Core Concept

In the previous pattern, pointers moved toward each other.

In this pattern, both pointers move in the same direction but at different speeds.

Typically, the Slow pointer moves one step at a time, while the Fast pointer moves two steps at a time.

How It Works Behind the Scenes

The most common application is detecting a cycle in a Linked List.

A cycle occurs when a node points back to a previous node, creating an infinite loop.

If you iterate through such a list normally, the program will never terminate. However, by using two pointers moving at different speeds, you can prove the existence of a cycle mathematically.

If there is no cycle, the Fast pointer will eventually reach the end of the list (null) and stop.

If there is a cycle, the Fast pointer will effectively “lap” the Slow pointer within the loop. Since the distance between them increases by one node at every step, the Fast pointer is guaranteed to catch up to the Slow pointer.

If the two pointers ever point to the same object, a cycle exists.

When to Use It

Detecting cycles in Linked Lists or Arrays.

Finding the middle element of a Linked List (when the Fast pointer reaches the end, the Slow pointer will be exactly at the middle).

Determining if a number sequence enters a repetitive loop.

Pattern 4: Merge Intervals

This pattern handles problems involving overlapping ranges, which are common in scheduling and calendar applications.

The Core Concept

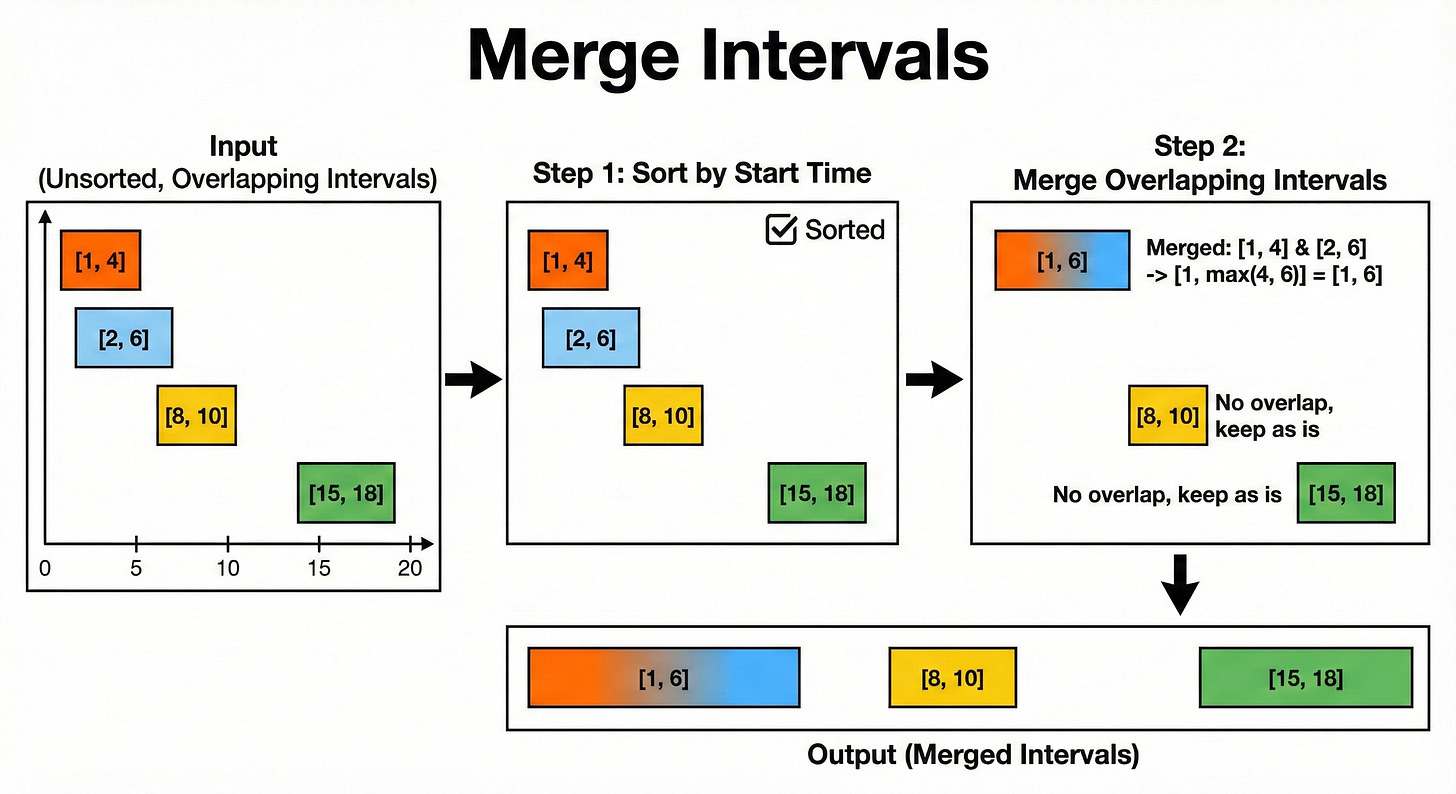

An interval has a start point and an end point.

Problems often ask you to merge overlapping intervals or insert a new one into a list.

The key to efficiently solving these problems is sorting. You almost always begin by sorting the intervals based on their start times.

How It Works Behind the Scenes

Once the list is sorted, you can iterate through it linearly.

Compare the current interval’s Start time with the previous interval’s End time.

If the current Start is less than or equal to the previous End, the intervals overlap.

You then merge them by updating the previous interval’s End time to be the maximum of both End times.

If they do not overlap, you simply move to the next interval.

Without sorting, you would have to compare every interval against every other interval, which brings you back to the inefficient brute force method.

When to Use It

“Merge all overlapping intervals.”

“Given a set of meeting times, find the minimum number of conference rooms required.”

“Insert a new time slot into a calendar.”

Pattern 5: Breadth-First Search (BFS)

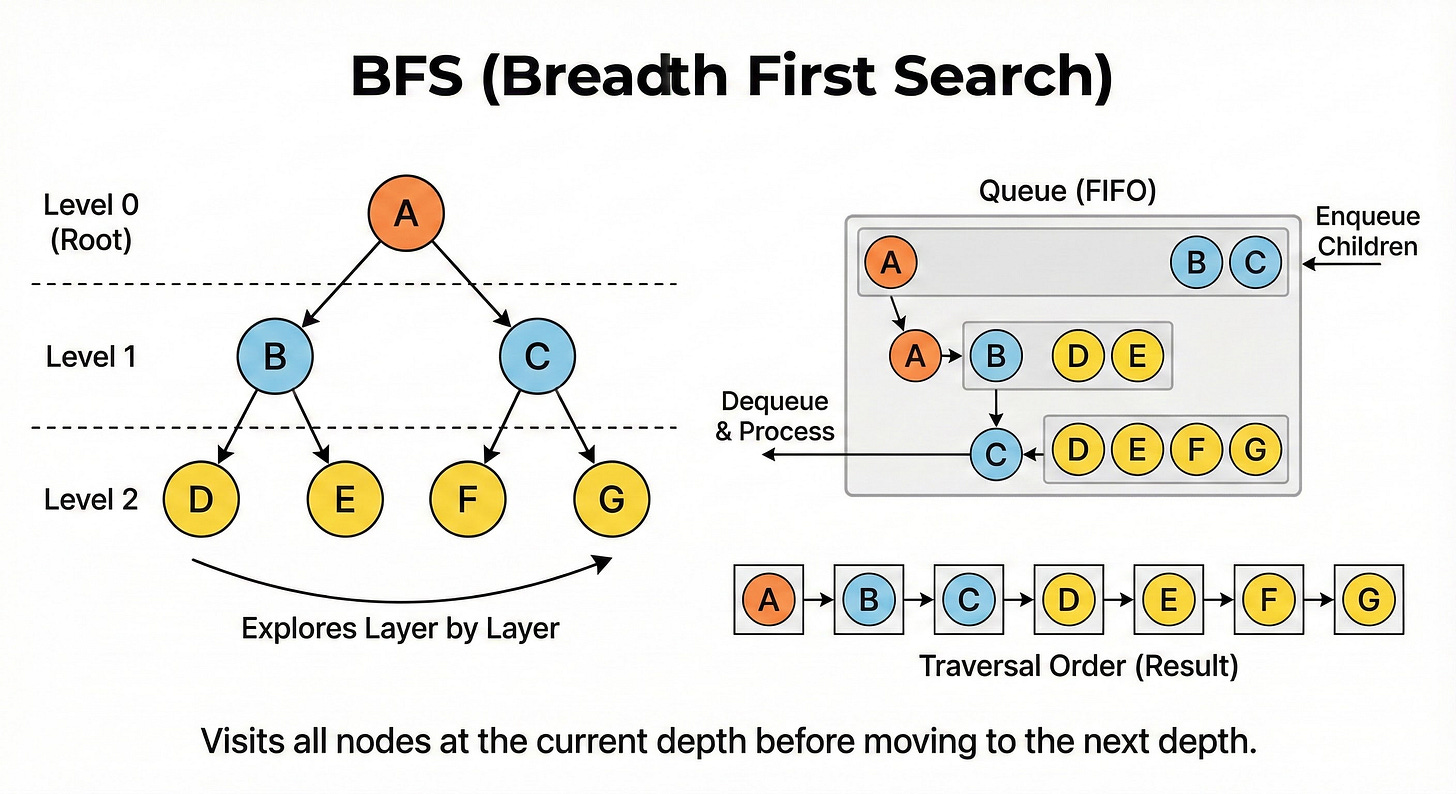

We now move from linear data to non-linear structures: Trees and Graphs.

BFS is a strategy for traversing (visiting every node of) these structures layer by layer.

The Core Concept

BFS explores the tree horizontally. It visits the root, then all immediate children of the root, then all grandchildren, and so on. It expands outward uniformly like a ripple in a pond.

How It Works Behind the Scenes

BFS relies on a Queue data structure.

A Queue operates on a First-In-First-Out (FIFO) basis.

Add the root node to the Queue.

While the Queue is not empty:

Remove (dequeue) the node at the front.

Process that node.

Add (enqueue) all of its children to the back of the Queue.

By adding children to the back, you ensure that you process all nodes at the current level before beginning to process any nodes at the next level.

When to Use It

Shortest Path: In an unweighted graph, BFS guarantees finding the shortest path between two nodes because it explores paths by length (1 edge away, then 2 edges away, etc.).

Level-order traversal of a tree.

Finding all nodes connected to a specific source.

Pattern 6: Hash Maps for Tracking

While not a movement pattern like the others, using Hash Maps (or Dictionaries) is a fundamental data organization pattern used to optimize time complexity.

The Core Concept

A Hash Map stores data in Key-Value pairs. The defining feature of a Hash Map is its lookup speed.

Finding an item in a standard array takes linear time because you must scan the list.

Finding an item in a Hash Map takes constant time. It is effectively instant, regardless of the dataset size.

How It Works Behind the Scenes

We often use Hash Maps as Frequency Counters or Index Trackers.

Consider the “Two Sum” problem again. If the array is not sorted, we cannot use Two Pointers. Instead, we can iterate through the array once.

For every number x, we calculate the required complement (Target - x). We query the Hash Map: “Does this complement exist?”

If yes: You have found the pair.

If no: Store the current number

xin the Hash Map and continue.

This allows you to solve the problem in a single pass without sorting or nested loops.

When to Use It

When you need to check for the existence of an element instantly.

When you need to count the frequency of items in a list.

When you need to match elements across different data sources.

Conclusion

Coding interviews can be hard.

The pressure is high, and the variety of questions seems endless. However, once you stop viewing each problem as a unique riddle and start seeing them as applications of these core patterns, the difficulty becomes manageable.

Here is a summary of the key takeaways:

Sliding Window: Use this for contiguous subarrays or substrings to avoid re-calculating overlapping data.

Two Pointers: Use this for sorted arrays to find pairs or invert data without using extra memory.

Fast and Slow Pointers: Use this for cycle detection in linked lists or arrays.

Merge Intervals: Sort by start time to handle overlapping ranges efficiently.

BFS: Use a Queue to find the shortest path or explore data level-by-level.

Hash Maps: Use this to track data you have seen and enable instant lookups.

Focus on understanding the logic behind these patterns.

Practice implementing them from scratch.

Once you own these tools, you will be ready for whatever the interview throws at you.