System Design 101: A Developer’s Guide to Serverless Architecture

Master Serverless Architecture for your system design interview. Learn how Function-as-a-Service (FaaS) works, and understand critical concepts like cold starts, stateless design, and connection pooling.

Deploying software traditionally requires provisioning dedicated hardware to handle expected network traffic.

Engineering teams must accurately predict compute capacity to prevent system crashes during sudden traffic spikes.

Overestimating this capacity results in expensive hardware sitting completely idle during low traffic periods. This heavy operational burden slows down feature development and drains financial budgets unnecessarily.
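To make the cost of over-provisioning concrete, here is a minimal sketch that estimates how much of a peak-sized fleet sits idle over a day. The traffic figures and the `idle_fraction` helper are hypothetical, chosen only to illustrate the arithmetic.

```python
def idle_fraction(hourly_demand, provisioned_capacity):
    """Return the share of provisioned capacity that goes unused over the period."""
    if not hourly_demand:
        raise ValueError("hourly_demand must not be empty")
    if provisioned_capacity <= 0:
        raise ValueError("provisioned_capacity must be positive")
    total_provisioned = provisioned_capacity * len(hourly_demand)
    # Demand above capacity cannot be served, so it does not count as used capacity.
    total_used = sum(min(d, provisioned_capacity) for d in hourly_demand)
    return 1 - total_used / total_provisioned


# Hypothetical day: 20 quiet hours at 100 req/s, 4 peak hours at 1000 req/s.
demand = [100] * 20 + [1000] * 4
peak_sized = max(demand)  # a fixed fleet sized for the peak hour

print(f"idle share: {idle_fraction(demand, peak_sized):.0%}")
```

With these assumed numbers, three quarters of the paid-for capacity is never used, even though the fleet is only just large enough for the busiest hours.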

Understanding modern cloud computing is critical for solving these strict infrastructure bottlenecks.

The industry shift toward dynamic resource allocation removes much of the need to keep permanent capacity running in the background.

This technical shift fundamentally changes how software teams architect, deploy, and maintain large-scale distributed systems.

Complete Guide to Serverless Architecture

Traditional backend hosting requires allocating specific hardware resources like memory and processing power beforehand.

A dedicated virtual machine must run continuously to listen for incoming network requests.

If traffic suddenly increases, the machine's processor can become saturated and the server starts dropping connections. If the overload persists, it can take the entire application down.

Slow Manual Interventions

Scaling traditional setups requires significant manual intervention from operations teams.

Engineers must boot up new servers and configure complex load balancers to distribute the incoming traffic.

This manual scaling process takes considerable time to complete successfully. Often, the application crashes under heavy load long before the new hardware is fully initialized.
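The effect of slow scaling can be sketched with a small simulation. It assumes, purely for illustration, that extra capacity is requested the moment a spike begins and only becomes usable after a fixed boot delay. The `dropped_requests` function and every number below are hypothetical.

```python
def dropped_requests(demand_per_minute, base_capacity, added_capacity,
                     boot_minutes, spike_minute):
    """Count requests dropped while extra capacity is still booting.

    Scaling is assumed to start at spike_minute; the extra capacity only
    becomes usable boot_minutes later.
    """
    dropped = 0
    for minute, demand in enumerate(demand_per_minute):
        capacity = base_capacity
        if minute >= spike_minute + boot_minutes:
            capacity += added_capacity
        # Anything above the currently available capacity is lost.
        dropped += max(0, demand - capacity)
    return dropped


# Hypothetical traffic: 5 calm minutes, then a 10-minute spike.
traffic = [500] * 5 + [2000] * 10

print(dropped_requests(traffic, base_capacity=1000, added_capacity=1500,
                       boot_minutes=6, spike_minute=5))
```

In this toy model, every request lost falls inside the boot window, which is why shortening provisioning time matters more than the eventual size of the fleet.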

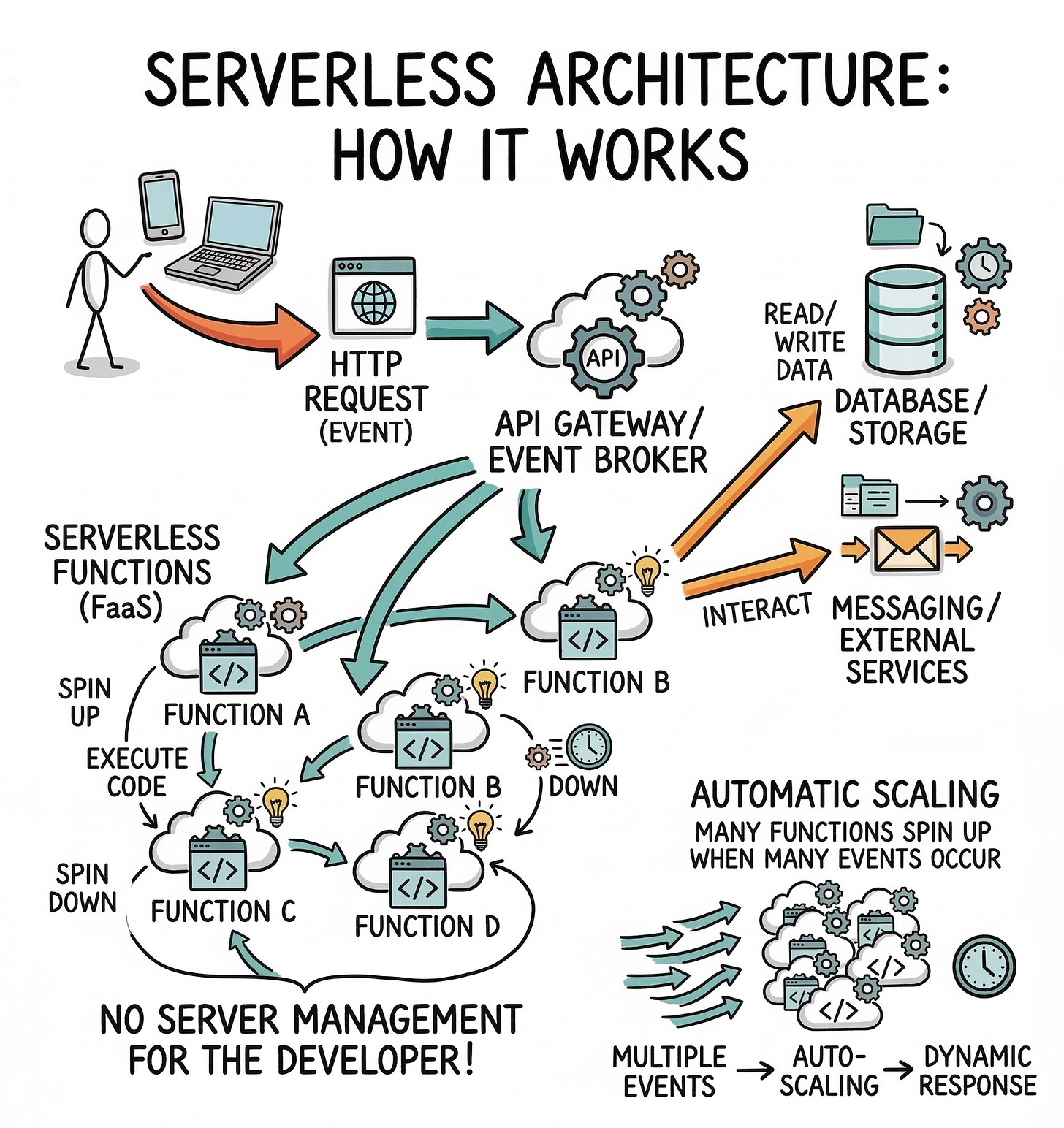

Defining Serverless Architecture

Serverless architecture is a modern execution model that solves these exact scaling problems. The name itself often causes confusion for developers learning system design concepts.

Physical hardware absolutely still exists in massive data centers around the world. The critical difference is that the software developer never interacts with the server operating system.

Abstracting Hardware Management

The cloud vendor completely abstracts the infrastructure layer away from the engineering team. The vendor takes full responsibility for applying security patches and updating operating systems.

Developers simply write their application code and upload it directly to the cloud platform.

The platform handles all resource provisioning entirely behind the scenes.
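As a rough illustration of what "just the application code" can look like, here is a minimal function sketch. The `(event, context)` signature follows the shape used by AWS Lambda's Python handlers; the `name` field in the event and the response layout are assumptions made for this example, and other platforms use different conventions.

```python
import json


def handler(event, context):
    """Entry point the platform would invoke once per request.

    The "name" field in the event is a hypothetical input.
    """
    name = event.get("name", "world")
    # No servers, ports, or process management here: the function only
    # maps one input event to one response.
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local call for illustration; in production the platform supplies both arguments.
print(handler({"name": "Ada"}, None))
```

Note that the function keeps no state between calls, so the platform is free to run as many copies as the incoming traffic requires.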
