Service Mesh From Scratch: Data Planes, Control Planes, and the Sidecar Pattern

Explore the mechanics of mTLS, circuit breaking, and traffic management within a Service Mesh architecture without complex jargon.

The transition from monolithic architectures to distributed microservices has fundamentally changed how software is designed, deployed, and scaled.

In a traditional monolithic system, different components of an application run within the same memory space.

When component A needs to send data to component B, it executes a simple function call. This process is fast, reliable, and secure because the data never leaves the memory of a single process on a single server.

However, modern system design focuses on breaking these large applications into smaller, independent services.

While this allows teams to work faster and scale specific parts of the system, it introduces a significant new challenge: the network.

In a distributed system, components must communicate over a network to function. Unlike in-memory function calls, the network is inherently unreliable.

Packets can be lost, latency can spike unpredictably, and connections can be intercepted. This shift forces developers to handle a complex set of reliability and security concerns that did not exist in the monolithic world.

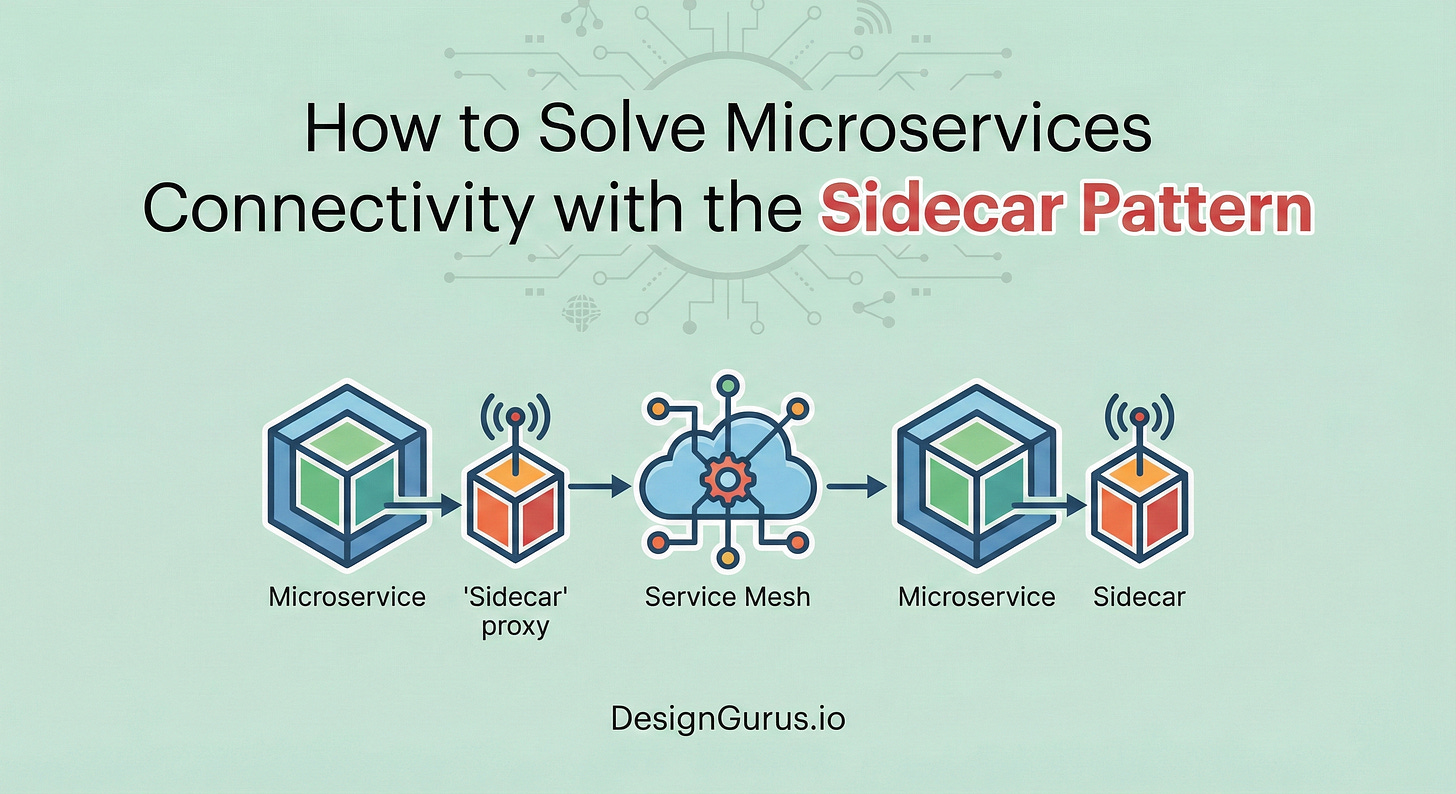

The Service Mesh and the Sidecar Pattern emerged as a widely adopted solution to this architectural problem. They provide a mechanism to decouple the complex logic of network communication from the business logic of the application.

This ensures that developers can focus on building features while a dedicated infrastructure layer handles the reliability and security of data in transit.

The Problem: The Burden of Network Logic

To understand the necessity of a Service Mesh, it is helpful to look at how developers handled distributed networking before this pattern existed.

When a developer writes code for a service that needs to call another service, they must assume the call might fail. To make the system robust, they add logic to handle these failures. This usually involves:

Retries: If the request fails, try again, up to a fixed limit such as three attempts.

Timeouts: If the request takes longer than five seconds, give up.

Circuit Breaking: If the destination is down, stop sending requests entirely for a while.

Encryption: Ensure the data is encrypted using TLS before sending it.
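To make the burden concrete, here is a minimal Python sketch of what that in-application logic might look like. It is an illustration under stated assumptions, not a production implementation: the names `CircuitBreaker`, `CircuitOpenError`, `call_with_retries`, and `fetch`, along with the thresholds and backoff values, are hypothetical and chosen only to mirror the four items above.

```python
import time
import urllib.request


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected without being attempted."""


class CircuitBreaker:
    """Hypothetical minimal breaker: opens after N consecutive failures."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, func):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("destination marked unhealthy")
            # Cool-down elapsed: let one trial call through. A single
            # failure from here re-opens the circuit immediately.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def call_with_retries(func, attempts=3, backoff=0.1, sleep=time.sleep):
    """Call func up to `attempts` times, backing off exponentially between tries."""
    last_error = None
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise  # retrying against an open circuit would defeat its purpose
        except Exception as exc:
            last_error = exc
            if attempt < attempts - 1:
                sleep(backoff * (2 ** attempt))
    raise last_error


def fetch(url, timeout=5.0):
    """Timeout: give up after `timeout` seconds. An https:// URL is sent over TLS."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()
```

A service would then wrap every outbound call in something like `call_with_retries(lambda: breaker.call(lambda: fetch(url)))`. Even this simplified version is a meaningful amount of code that has nothing to do with business logic, and it would need an equivalent in every language the organization uses.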

In the early days of microservices, this logic was written directly inside the application code, often using “fat client” libraries.

While this works for small systems, it creates massive technical debt in large-scale architectures.

If an organization runs 100 services written in different programming languages like Java, Go, and Python, it must maintain a separate implementation of this networking logic for each language and keep all 100 services on compatible versions of it.

If the security team decides to change the encryption standard, every single team must update their libraries and redeploy their services.

This coupling of infrastructure concerns with application code slows down development and introduces inconsistencies across the system.

The Solution: The Sidecar Pattern

The industry solved this coupling problem by moving the networking logic out of the application and into a separate, dedicated process. This architecture is known as the Sidecar Pattern.

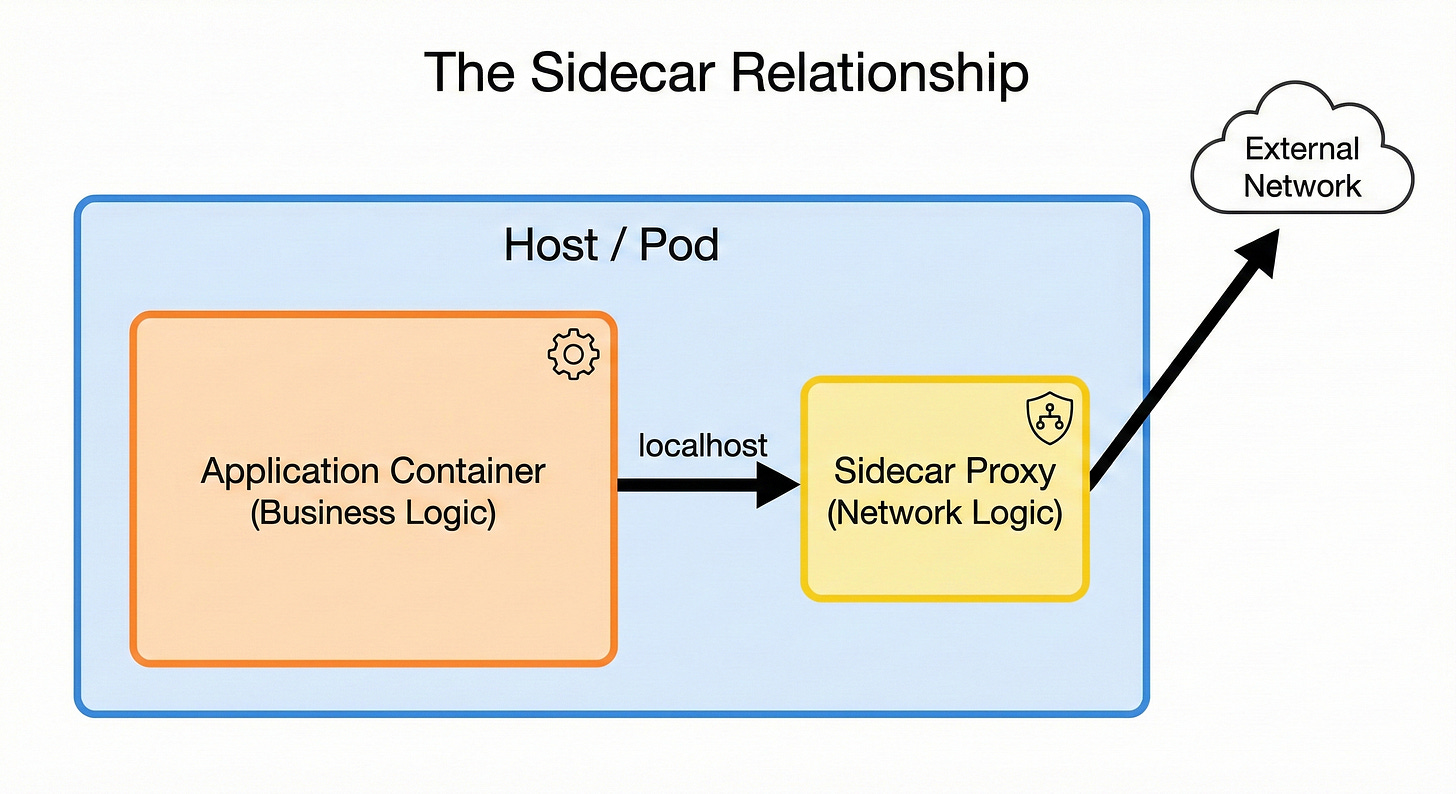

The core concept involves deploying a small, lightweight “helper” container alongside the main application container. These two containers run on the same server or within the same logical host (such as a Kubernetes Pod).

Because this helper sits immediately next to the application, it is referred to as a sidecar.

How It Works

The relationship between the main application and the sidecar is strict but simple. The application is stripped of all complex networking code and contains only business logic.

When the application needs to send a request to another service, it does not send it to the external network.
