System Design Interview Question: Designing Uber in 45 Mins

A complete 14-step process for designing Uber/Lyft in a system design interview, covering an architecture that connects riders with drivers in real time.

Here is a step-by-step system design for a ride-hailing platform like Uber or Lyft.

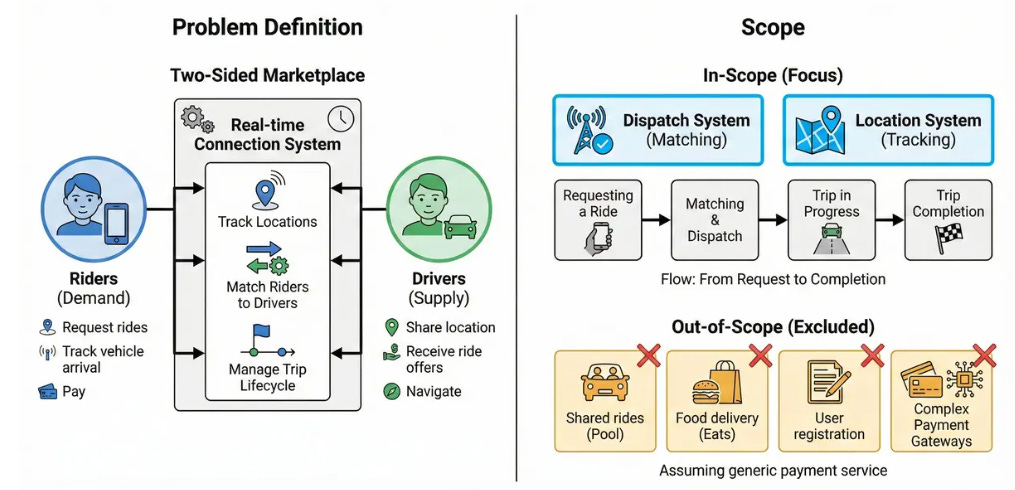

1. Problem Definition and Scope

We are designing a two-sided marketplace that connects Riders (demand) with Drivers (supply) in real-time. The system must track driver locations, match riders to nearby drivers, and manage the trip lifecycle.

Main User Groups:

Riders: Request rides, track vehicle arrival, and pay.

Drivers: Share location, receive ride offers, and navigate.

Scope:

We will focus on the Dispatch System (Matching) and Location System (Tracking).

We will cover the flow from “Requesting a Ride” to “Trip Completion.”

Out of Scope: Shared rides (Pool), Food delivery (Eats), User registration, and complex Payment Gateways (we assume a generic payment service).

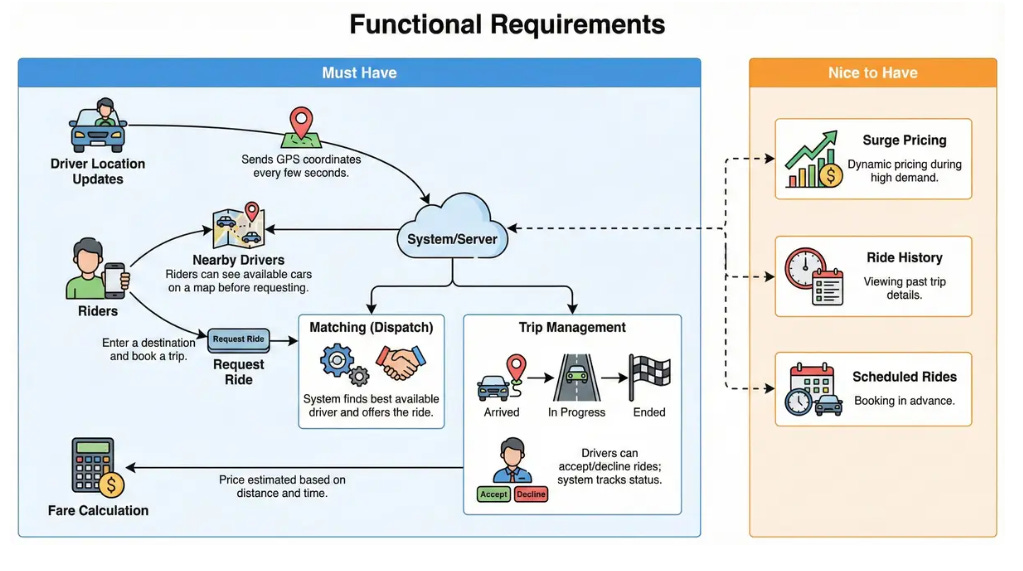

2. Clarify functional requirements

Must Have:

Driver Location Updates: Drivers send GPS coordinates every few seconds.

Nearby Drivers: Riders can see available cars on a map before requesting.

Request Ride: Riders can enter a destination and book a trip.

Matching (Dispatch): The system finds the best available driver and offers the ride.

Trip Management: Drivers can accept/decline rides; system tracks status (Arrived, In Progress, Ended).

Fare Calculation: Price is estimated based on distance and time.

Nice to Have:

Surge Pricing: Dynamic pricing during high demand.

Ride History: Viewing past trip details.

Scheduled Rides: Booking in advance.

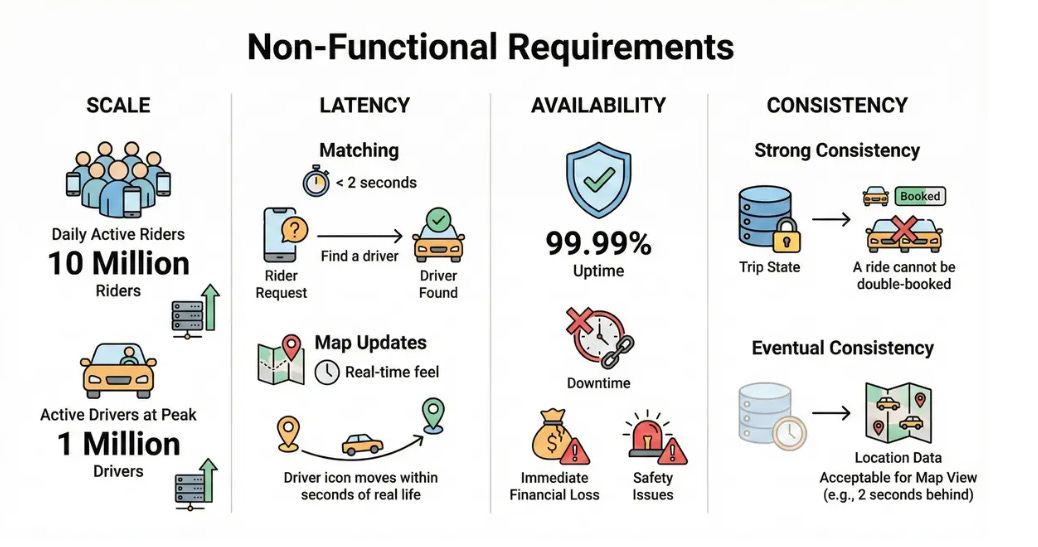

3. Clarify non-functional requirements

Scale:

10 Million Daily Active Riders.

1 Million Active Drivers online at peak.

Latency:

Matching: < 2 seconds to find a driver.

Map Updates: Real-time feel (driver icon moves within seconds of real life).

Availability: 99.99%. Downtime causes immediate financial loss and safety issues.

Consistency:

Strong Consistency: Required for Trip State (a ride cannot be double-booked).

Eventual Consistency: Acceptable for Location Data (a map view that lags real life by 2 seconds is fine).

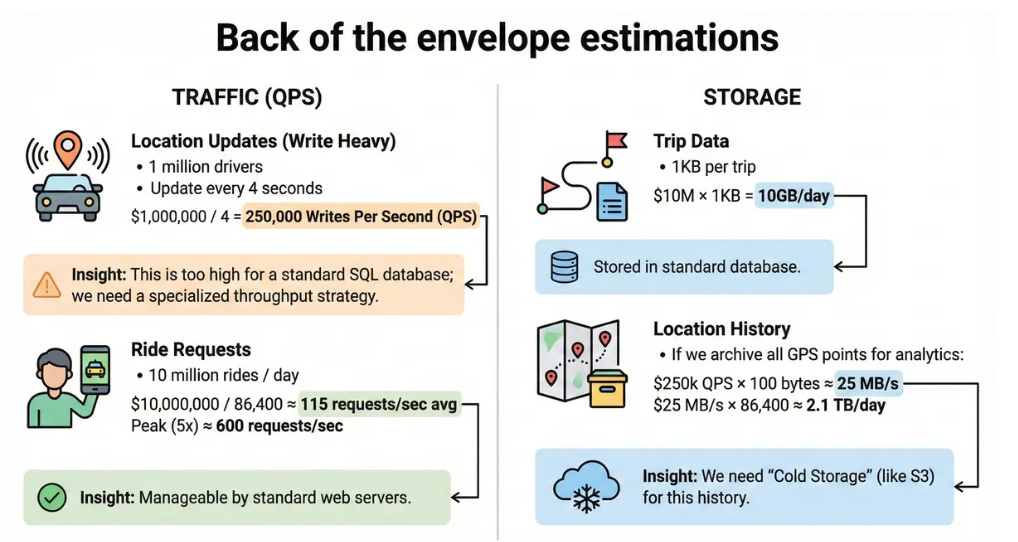

4. Back of the envelope estimates

Traffic (QPS):

Location Updates (Write Heavy):

1 million drivers.

Update every 4 seconds.

1,000,000 / 4 = 250,000 Writes Per Second (QPS).

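Insight: This is too high for a standard SQL database to absorb as row updates; the write path needs a store built for throughput, such as an in-memory cache that holds only each driver's latest position.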

Ride Requests:

10 million rides / day (assuming roughly one ride per daily active rider).

10,000,000 / 86,400 ≈ 116 requests/sec avg.

Peak (5x) ≈ 600 requests/sec.

Insight: Manageable by standard web servers.

Storage:

Trip Data: 1 KB per trip. 10 M x 1 KB = 10 GB/day.

Location History: If we archive all GPS points for analytics:

250k QPS x 100 bytes ≈ 25 MB/s.

25 MB/s x 86,400 ≈ 2.2 TB/day.

We need “Cold Storage” (like S3) for this history.
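The arithmetic in this section can be reproduced with a few lines of Python. This is only a sketch: the variable names are made up for illustration, and the inputs are the assumptions stated above (1 million drivers, a 4-second update interval, 10 million rides per day, a 5x peak factor, 1 KB per trip, 100 bytes per GPS point).

```python
# Back-of-the-envelope estimates using the figures assumed in this section.
SECONDS_PER_DAY = 86_400

drivers_online = 1_000_000      # active drivers at peak
update_interval_s = 4           # one GPS update every 4 seconds
rides_per_day = 10_000_000      # ride requests per day
peak_factor = 5                 # peak-to-average multiplier
trip_record_bytes = 1_000       # ~1 KB per trip record
location_point_bytes = 100      # ~100 bytes per archived GPS point

location_writes_per_s = drivers_online / update_interval_s
avg_ride_requests_per_s = rides_per_day / SECONDS_PER_DAY
peak_ride_requests_per_s = avg_ride_requests_per_s * peak_factor
trip_storage_gb_per_day = rides_per_day * trip_record_bytes / 1e9
location_mb_per_s = location_writes_per_s * location_point_bytes / 1e6
location_tb_per_day = location_mb_per_s * SECONDS_PER_DAY / 1e6

print(f"Location writes/s:      {location_writes_per_s:,.0f}")
print(f"Ride requests/s (avg):  {avg_ride_requests_per_s:.1f}")
print(f"Ride requests/s (peak): {peak_ride_requests_per_s:.1f}")
print(f"Trip storage GB/day:    {trip_storage_gb_per_day:.1f}")
print(f"Location MB/s:          {location_mb_per_s:.1f}")
print(f"Location TB/day:        {location_tb_per_day:.2f}")
```

Changing a single input, such as the update interval, shows how sensitive the write load is to that one choice.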

5. API design

We use WebSockets for the high-frequency location stream and REST for transactional actions.

1. Update Location (Driver -> Server)

Protocol: WebSocket

Payload: { "lat": 37.77, "lng": -122.41, "status": "available" }

Response: ACK
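As a rough illustration of what the server might do with each location message before acknowledging it, here is a minimal validation sketch. The function name, the error shape, the ACK shape, and every status other than "available" are assumptions; the text only specifies the three payload fields and an ACK response.

```python
import json

# Only "available" appears in the payload example; the other values are assumed.
VALID_STATUSES = {"available", "on_trip", "offline"}

def handle_location_message(raw: str) -> dict:
    """Validate one driver location message and return an ACK or an error."""
    try:
        msg = json.loads(raw)
        lat = float(msg["lat"])
        lng = float(msg["lng"])
        status = msg["status"]
    except (ValueError, KeyError, TypeError):
        # Covers invalid JSON, missing fields, and non-numeric coordinates.
        return {"type": "error", "reason": "malformed"}
    if not (-90 <= lat <= 90 and -180 <= lng <= 180):
        return {"type": "error", "reason": "out_of_range"}
    if status not in VALID_STATUSES:
        return {"type": "error", "reason": "bad_status"}
    # A real handler would forward the point to the location pipeline here.
    return {"type": "ack"}

ok = handle_location_message('{"lat": 37.77, "lng": -122.41, "status": "available"}')
bad = handle_location_message('{"lat": 137.77, "lng": -122.41, "status": "available"}')
print(ok, bad)
```

Rejecting bad points at the edge keeps garbage coordinates out of the driver index, which matters when the same data drives matching.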

2. Request Ride (Rider -> Server)

Method: POST /v1/trips

Body: { "pickup_lat": ..., "pickup_lng": ..., "dest_lat": ..., "dest_lng": ..., "service_type": "standard" }

Response: { "trip_id": "t123", "status": "matching", "eta": 5 }

3. Accept Ride (Driver -> Server)

Method: POST /v1/trips/{trip_id}/accept

Body: { "driver_id": "d456" }

Response: 200 OK or 409 Conflict (if ride is no longer available).
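One common way to get the 200-or-409 behavior is a conditional update: the trip is assigned only if no driver holds it yet, and the number of affected rows tells the server who won. The sketch below uses Python's built-in sqlite3 purely so it runs anywhere; the table layout, the accept_trip helper, and the MATCHED status are assumptions for illustration, not the author's schema.

```python
import sqlite3

def accept_trip(conn: sqlite3.Connection, trip_id: str, driver_id: str) -> int:
    """Return 200 if this driver claimed the trip, 409 if it was not claimable."""
    cur = conn.execute(
        "UPDATE trips SET driver_id = ?, status = 'MATCHED' "
        "WHERE trip_id = ? AND driver_id IS NULL AND status = 'MATCHING'",
        (driver_id, trip_id),
    )
    conn.commit()
    # Exactly one row changes only for the first driver to accept.
    return 200 if cur.rowcount == 1 else 409

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE trips (trip_id TEXT PRIMARY KEY, driver_id TEXT, status TEXT NOT NULL)"
)
conn.execute("INSERT INTO trips VALUES ('t123', NULL, 'MATCHING')")
conn.commit()

first = accept_trip(conn, "t123", "d456")   # first driver to accept
second = accept_trip(conn, "t123", "d789")  # a later driver for the same trip
print(first, second)
```

Because the check and the write happen in one statement, two drivers accepting at the same moment cannot both succeed. In this simplified version an unknown trip_id also returns 409; a fuller service would likely distinguish that case with a 404.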

4. Trip Status Update

Method: PATCH /v1/trips/{trip_id}

Body: { "status": "picked_up" }
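A status update endpoint like this usually needs a guard so a trip cannot jump to an arbitrary state. A minimal sketch of such a guard follows. The state names and the transition table are assumptions chosen for illustration; the text names trip states slightly differently in different places, so treat this as one possible layout rather than the actual one.

```python
# Hypothetical transition table: each state maps to the states it may move to.
ALLOWED_TRANSITIONS = {
    "REQUESTED": {"MATCHING", "CANCELLED"},
    "MATCHING": {"MATCHED", "CANCELLED"},
    "MATCHED": {"ARRIVED", "CANCELLED"},
    "ARRIVED": {"IN_PROGRESS", "CANCELLED"},
    "IN_PROGRESS": {"COMPLETED"},
    "COMPLETED": set(),   # terminal
    "CANCELLED": set(),   # terminal
}

class InvalidTransition(Exception):
    """Raised when a status update is not allowed from the current state."""

def transition(current: str, requested: str) -> str:
    """Return the new state, or raise if the move is not in the table."""
    if requested not in ALLOWED_TRANSITIONS.get(current, set()):
        raise InvalidTransition(f"{current} -> {requested} is not allowed")
    return requested

state = "REQUESTED"
for nxt in ["MATCHING", "MATCHED", "ARRIVED", "IN_PROGRESS", "COMPLETED"]:
    state = transition(state, nxt)
print(state)
```

An API layer could map InvalidTransition to a 409 Conflict, mirroring how the accept endpoint reports a ride that is no longer available.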

6. High-level architecture

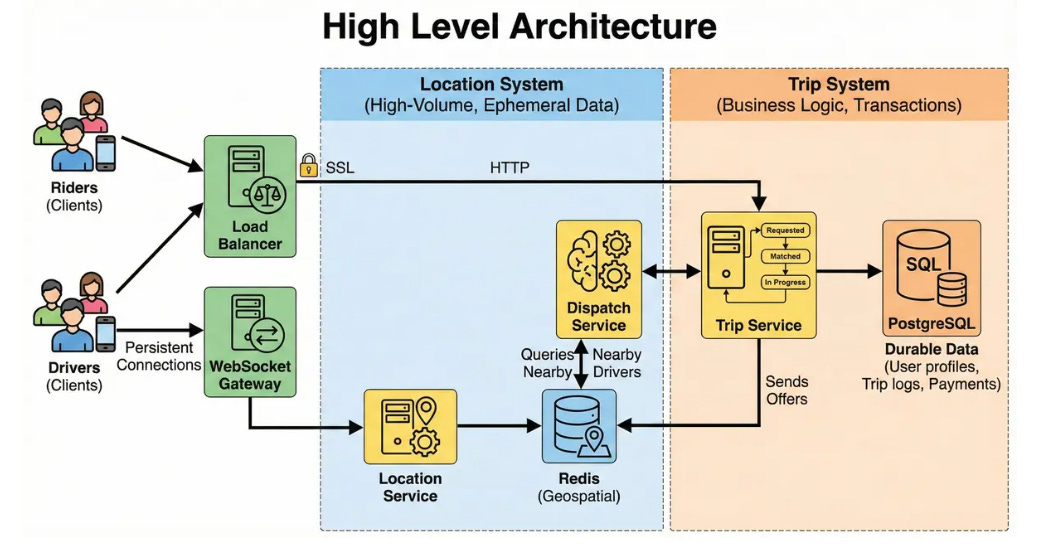

We separate the system into two distinct parts: the Location System (handles high-volume, ephemeral data) and the Trip System (handles business logic and transactions).

Components:

Load Balancer: Routes HTTP requests and terminates SSL.

WebSocket Gateway: Maintains persistent connections with millions of drivers.

Location Service: Ingests the 250k QPS location stream and writes each driver's latest position to the Redis cache.

Redis (Geospatial): Stores the current location of every active driver.

Dispatch Service: The “Brain.” It queries Redis to find nearby drivers and sends offers.

Trip Service: Manages the state machine (Requested -> Matched -> In Progress) and writes to SQL.

PostgreSQL: Stores durable data (User profiles, Trip logs, Payments).
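To make the Location Service and Dispatch Service interaction concrete, here is a small in-memory stand-in for the geospatial store: the location path calls update, and the dispatch path calls nearby. It is a sketch of the general idea (bucket drivers into grid cells, then filter by true distance), not how Redis implements geospatial queries. The class name, the cell size, and the method names are all hypothetical.

```python
import math
from collections import defaultdict

CELL_DEG = 0.01  # assumed grid cell size in degrees (about 1.1 km of latitude)

def haversine_km(lat1, lng1, lat2, lng2):
    """Great-circle distance in kilometers between two lat/lng points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lng2 - lng1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(a))

class DriverIndex:
    """Keeps only the latest position per driver, bucketed by grid cell."""

    def __init__(self):
        self._cells = defaultdict(set)  # (cell_x, cell_y) -> driver ids
        self._pos = {}                  # driver id -> (lat, lng)

    @staticmethod
    def _cell(lat, lng):
        return (math.floor(lat / CELL_DEG), math.floor(lng / CELL_DEG))

    def update(self, driver_id, lat, lng):
        old = self._pos.get(driver_id)
        if old is not None:
            self._cells[self._cell(*old)].discard(driver_id)
        self._pos[driver_id] = (lat, lng)
        self._cells[self._cell(lat, lng)].add(driver_id)

    def nearby(self, lat, lng, radius_km):
        """Return driver ids within radius_km, nearest first.

        Approximate cell coverage; does not handle the antimeridian or poles.
        """
        lat_span = math.ceil(radius_km / 111.0 / CELL_DEG)
        km_per_lng_deg = 111.0 * max(math.cos(math.radians(lat)), 0.01)
        lng_span = math.ceil(radius_km / km_per_lng_deg / CELL_DEG)
        cx, cy = self._cell(lat, lng)
        hits = []
        for dx in range(-lat_span, lat_span + 1):
            for dy in range(-lng_span, lng_span + 1):
                for driver_id in self._cells.get((cx + dx, cy + dy), ()):
                    dist = haversine_km(lat, lng, *self._pos[driver_id])
                    if dist <= radius_km:
                        hits.append((dist, driver_id))
        return [driver_id for _, driver_id in sorted(hits)]

index = DriverIndex()
index.update("d1", 37.7750, -122.4190)  # a few dozen meters from the rider
index.update("d2", 37.7849, -122.4094)  # roughly 1.4 km away
index.update("d3", 37.8716, -122.2727)  # across the bay, well outside 2 km
print(index.nearby(37.7749, -122.4194, radius_km=2.0))
```

The grid keeps each query to a handful of cells instead of a scan over every driver, which is the property the dispatch path needs at this scale.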

7. Data model

1. Primary Database (PostgreSQL)

Used for critical data requiring ACID guarantees.

Trips Table:

trip_id (PK)

rider_id (FK)

driver_id (FK) - Nullable initially

status (Enum: REQUESTED, MATCHING, IN_PROGRESS, COMPLETED, CANCELLED)

pickup_loc, dropoff_loc

fare

created_at, updated_at
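The Trips table above could be expressed roughly as the following DDL. It is run here through Python's built-in sqlite3 only so the sketch is executable; the design itself targets PostgreSQL. The column types, the split of each location into latitude and longitude columns, storing the fare in cents, and the CHECK constraint standing in for an enum are all assumptions. Foreign keys are omitted because the rider and driver tables are not shown.

```python
import sqlite3

SCHEMA = """
CREATE TABLE trips (
    trip_id     TEXT PRIMARY KEY,
    rider_id    TEXT NOT NULL,
    driver_id   TEXT,                      -- NULL until a driver is matched
    status      TEXT NOT NULL CHECK (status IN
                  ('REQUESTED', 'MATCHING', 'IN_PROGRESS', 'COMPLETED', 'CANCELLED')),
    pickup_lat  REAL NOT NULL,
    pickup_lng  REAL NOT NULL,
    dropoff_lat REAL NOT NULL,
    dropoff_lng REAL NOT NULL,
    fare_cents  INTEGER,                   -- NULL until the fare is known
    created_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
    updated_at  TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
"""

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.execute(
    "INSERT INTO trips (trip_id, rider_id, status, pickup_lat, pickup_lng, "
    "dropoff_lat, dropoff_lng) VALUES (?, ?, ?, ?, ?, ?, ?)",
    ("t123", "r1", "REQUESTED", 37.77, -122.41, 37.80, -122.27),
)
row = conn.execute(
    "SELECT driver_id, status FROM trips WHERE trip_id = 't123'"
).fetchone()

# The CHECK constraint rejects any status outside the enumerated set.
try:
    conn.execute("UPDATE trips SET status = 'TELEPORTING' WHERE trip_id = 't123'")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True
print(row, rejected)
```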
