System Design Deep Dive: Building a Distributed Tracing Infrastructure

What happens when a request hits 50 different services? We break down the architecture of tracing, from instrumentation to backend storage and visualization.

Modern software architecture has undergone a massive shift over the last decade.

The industry has largely moved away from monolithic designs, where all functional logic resided within a single application process on a single server.

In those traditional environments, understanding the behavior of the system was a relatively linear task.

If an error occurred, the relevant information was contained within a single log file, and the sequence of events was easy to follow because every component ran in the same process, sharing one memory space and one clock.

However, modern systems are typically composed of dozens or even hundreds of small, independent programs known as microservices.

These services are distributed across different servers, availability zones, or containers, and they communicate over the network. This architectural shift offers immense benefits in scalability, fault isolation, and deployment speed, but it carries a severe cost in visibility.

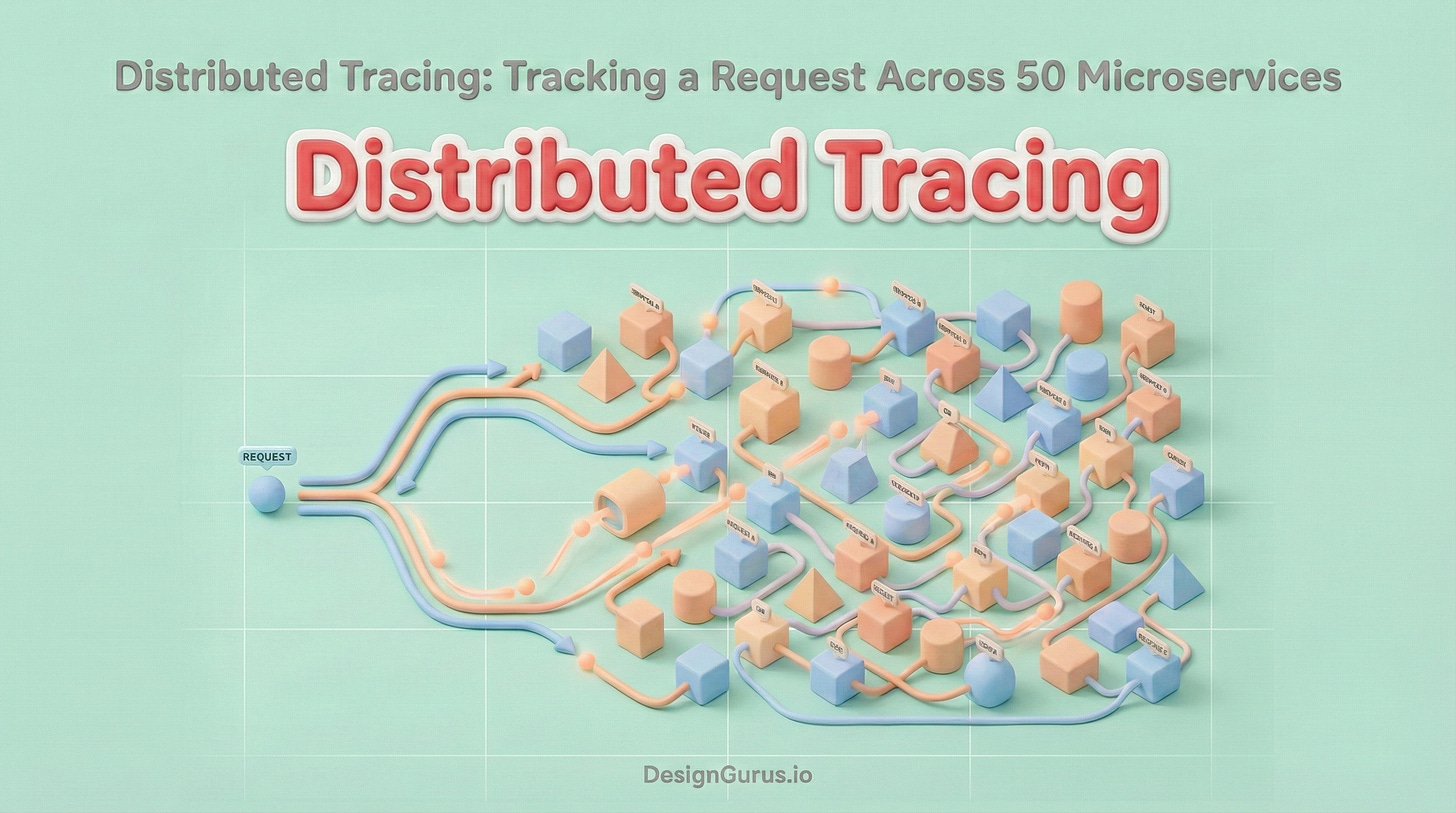

When a user triggers an action in a microservices architecture, that single request might initiate a complex chain reaction.

The initial request might be received by an API Gateway, which forwards it to an Authentication Service. Upon success, the request might fan out to an Inventory Service, a Pricing Service, and a User Profile Service.

These services might then make their own downstream calls to databases, caching layers, or third-party payment providers.

If this entire operation takes five seconds to complete, determining the cause of the delay is a difficult engineering challenge.

The latency could be caused by a slow database query in the thirtieth service in the chain, or it could be due to network congestion between the tenth and eleventh services.

Distributed tracing is the methodology designed to solve this specific problem. It provides the framework to track a request as it hops across network boundaries, reconstructing a coherent timeline of events from start to finish.
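To make the reconstruction step concrete, here is a minimal Python sketch. It assumes each service reports a span record with a trace ID, its own span ID, its parent's span ID, and start/end timestamps. The `Span` class, its field names, and the sample trace (loosely modeled on the gateway fan-out described earlier) are hypothetical. The sketch rebuilds the call tree for one trace and computes each span's self time, meaning the part of its duration not covered by its children, which is what points at the slowest link.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    # Hypothetical minimal span record; real tracers store many more fields.
    trace_id: str
    span_id: str
    parent_id: Optional[str]  # None marks the root span
    service: str
    start_ms: int
    end_ms: int

    @property
    def duration_ms(self) -> int:
        return self.end_ms - self.start_ms


def build_timeline(spans, trace_id):
    """Return (depth, span) pairs for one trace, depth-first, children ordered by start time."""
    spans = [s for s in spans if s.trace_id == trace_id]
    children, roots = defaultdict(list), []
    for s in spans:
        (roots if s.parent_id is None else children[s.parent_id]).append(s)
    ordered = []

    def walk(span, depth):
        ordered.append((depth, span))
        for child in sorted(children[span.span_id], key=lambda c: c.start_ms):
            walk(child, depth + 1)

    for root in sorted(roots, key=lambda r: r.start_ms):
        walk(root, 0)
    return ordered


def self_time_ms(span, spans):
    """Duration minus the union of direct children's intervals (handles parallel fan-out)."""
    intervals = sorted(
        (max(c.start_ms, span.start_ms), min(c.end_ms, span.end_ms))
        for c in spans
        if c.parent_id == span.span_id
    )
    covered, cur_start, cur_end = 0, None, None
    for start, end in intervals:
        if end <= start:
            continue
        if cur_end is None or start > cur_end:
            if cur_end is not None:
                covered += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            cur_end = max(cur_end, end)
    if cur_end is not None:
        covered += cur_end - cur_start
    return span.duration_ms - covered


# Hypothetical five-second request: the gateway fans out, and inventory calls a database.
spans = [
    Span("t1", "a", None, "api-gateway", 0, 5000),
    Span("t1", "b", "a", "auth", 10, 60),
    Span("t1", "c", "a", "inventory", 70, 4900),
    Span("t1", "d", "a", "pricing", 70, 300),
    Span("t1", "e", "a", "user-profile", 70, 200),
    Span("t1", "f", "c", "inventory-db", 100, 4800),
]

for depth, s in build_timeline(spans, "t1"):
    print(f"{'  ' * depth}{s.service}: {s.start_ms}-{s.end_ms} ms ({s.duration_ms} ms)")

culprit = max(spans, key=lambda s: self_time_ms(s, spans))
print("largest self time:", culprit.service, self_time_ms(culprit, spans), "ms")
```

Total duration alone would blame the gateway (5000 ms); self time shows that almost all of it is spent in `inventory-db` (4700 ms), two hops down the chain.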

The Core Problem: Loss of Context

To understand the necessity of distributed tracing, one must first understand why standard logging is insufficient in a distributed environment. In a monolithic application, logs are sequential.

If you read the log file from top to bottom, you can see the story of a request unfolding.

In a distributed system, logs are isolated. Service A has its own log file, and Service B has its own.

When Service A calls Service B, nothing links Service A's log entries to Service B's by default. Service B might handle thousands of requests per second.

If you look at the logs for Service B, you will see thousands of entries, but you will have no way of knowing which specific entry corresponds to the request sent by Service A.

The network acts as a black box that strips away context.

When a request crosses the network, it loses its history. Service B does not know that Service A was called by the API Gateway, or that the user is waiting for a webpage to load.

Distributed tracing restores this context by attaching metadata to the request that persists across these boundaries.
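One widely used format for this metadata is the W3C Trace Context `traceparent` HTTP header, which carries a version, a 128-bit trace ID, the caller's 64-bit span ID, and trace flags, all as lowercase hex. The Python sketch below shows the inject/extract round trip between two services. The helper names (`start_trace`, `child_of`, `inject`, `extract`) are hypothetical, headers are assumed to be a plain dict with lowercase keys, and only version `00` is accepted; production systems typically delegate this to a tracing library such as OpenTelemetry's propagators.

```python
import re
import secrets
from dataclasses import dataclass
from typing import Optional

# traceparent layout: version-traceid-parentid-flags (only version 00 handled here)
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


@dataclass(frozen=True)
class SpanContext:
    trace_id: str  # 32 hex chars, shared by every span in the request
    span_id: str   # 16 hex chars, unique to this hop
    sampled: bool = True


def _random_hex(nbytes: int) -> str:
    # All-zero IDs are invalid in the spec, so redraw in that (very unlikely) case.
    while True:
        value = secrets.token_hex(nbytes)
        if int(value, 16) != 0:
            return value


def start_trace() -> SpanContext:
    return SpanContext(trace_id=_random_hex(16), span_id=_random_hex(8))


def child_of(parent: SpanContext) -> SpanContext:
    # Same trace ID, fresh span ID: this is what ties B's work to A's request.
    return SpanContext(parent.trace_id, _random_hex(8), parent.sampled)


def inject(ctx: SpanContext, headers: dict) -> None:
    flags = "01" if ctx.sampled else "00"
    headers["traceparent"] = f"00-{ctx.trace_id}-{ctx.span_id}-{flags}"


def extract(headers: dict) -> Optional[SpanContext]:
    match = TRACEPARENT_RE.match(headers.get("traceparent", ""))
    if match is None:
        return None  # missing or malformed: the receiver would start a new trace
    trace_id, parent_span_id, flags = match.groups()
    if int(trace_id, 16) == 0 or int(parent_span_id, 16) == 0:
        return None
    return SpanContext(trace_id, parent_span_id, sampled=bool(int(flags, 16) & 0x01))


# Service A: start a trace and attach it to the outgoing request.
a_ctx = start_trace()
outgoing_headers = {}
inject(a_ctx, outgoing_headers)

# Service B: recover A's context and open its own span in the same trace.
received = extract(outgoing_headers)
b_ctx = child_of(received)
print(outgoing_headers["traceparent"])
print(b_ctx.trace_id == a_ctx.trace_id, received.span_id == a_ctx.span_id)
```

The design hinges on two rules: the trace ID never changes as the request travels, while a new span ID is generated at every hop. A tracing backend can then group spans by trace ID and link them into a tree through their parent span IDs.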
